DeepSeek and Compute: What the Nvidia Question Signals About China’s AI Stack

Updated:

DeepSeek Nvidia is not a news cycle topic. It is a capacity question that determines which Chinese AI labs can train frontier models in 2025 and which cannot. Compute access inside China now sits at the intersection of export controls, cloud allocation rules, and software lock-in. That intersection shapes architecture choices, training timelines, and unit economics.

DeepSeek matters because it operates within these constraints rather than outside them. NVIDIA hardware remains embedded in most serious training stacks through CUDA tooling and ecosystem depth. At the same time, modified chips such as the H20 introduce bandwidth and scaling limits that force engineering tradeoffs at the cluster level. Teams that fail to redesign around those limits face inflated costs and unstable throughput.

The DeepSeek Nvidia question, therefore, signals a structural shift. China’s AI competition is moving from scale signaling to compute discipline. Control over communication efficiency, memory optimization, portability across accelerators, and inference cost now defines an advantage. This article examines how that shift is unfolding across hardware access, software ecosystems, cloud power, and capital strategy through 2026.

Context That Professionals Inside China Actually Care About

The DeepSeek Nvidia Question as a Capacity Planning Problem

Inside China’s AI labs, DeepSeek Nvidia is treated as a capacity planning constraint, not a geopolitical headline. The operative variable is reliable GPU hours secured per quarter, under defined bandwidth ceilings and contractual allocation rules.

Training roadmaps are built around confirmed compute windows, not theoretical peak performance. Release cadence, feature scope, and model scale are aligned with reserved allocation blocks defined by scheduler policy.

In this environment, sustained throughput stability matters more than benchmark spikes. Models that scale predictably under real interconnect ceilings and quota governance deliver more usable progress than architectures optimized for theoretical FLOPS but vulnerable to interruption.

Senior infrastructure leads, therefore, prioritize scaling efficiency, cross-node communication behavior, and restart tolerance. Under constrained allocation, disciplined cluster execution determines model velocity.

Where the Constraint Actually Bites

Most public discussion focuses on chip model names. Practitioners focus on interconnect bandwidth, cross-node synchronization efficiency, and memory pressure in large-batch regimes. When modified accelerators impose communication limits, scaling efficiency drops as clusters expand. That forces teams to redesign parallelization strategies and checkpoint logic.

What Stack Control Means in 2025

Stack control now means owning scheduling logic, portability pathways, and cost visibility across heterogeneous hardware. Labs that treat compute as a strategic asset rather than a commodity are the ones positioned to scale through 2026.

The Compute Map in 2025 That Most Coverage Misses

Access to Nvidia-class accelerators in China in 2025 followed three primary paths.

- The first runs through domestic cloud providers that allocate modified GPUs such as the H20 under quota systems tied to contract size and enterprise priority.

- The second path involves large technology firms that operate private clusters and redistribute internal capacity across subsidiaries or strategic partners.

- The third path depends on legacy hardware already deployed before tighter restrictions took effect, which remains operational but finite.

Each path carries different risk profiles. Cloud allocations can shift with policy updates or demand surges. Private clusters require capital depth and internal governance alignment. Legacy hardware offers stability but limits expansion.

What Availability Really Means in Practice

Availability does not simply refer to chip presence inside national borders. It includes lead time for scaling, predictable scheduling rights, and guaranteed interconnect configurations. Labs plan around confirmed GPU hour blocks rather than the theoretical maximum cluster size.

When allocation windows narrow, training strategies shift toward shorter, iterative cycles rather than monolithic runs.

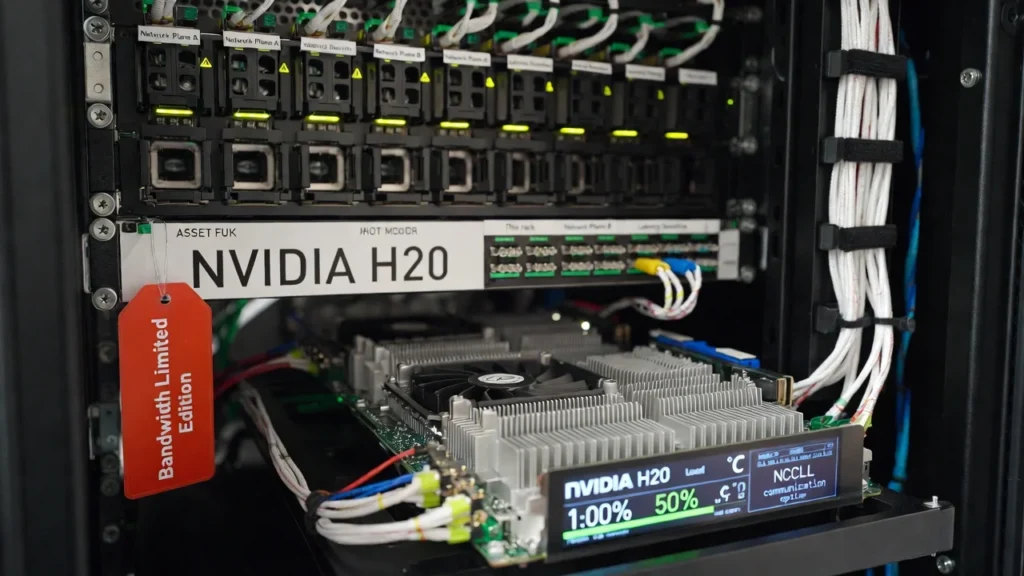

The H20 Era and Its Engineering Implications

The introduction of modified accelerators, such as the H20 changed optimization priorities. Engineers report that communication overhead and memory management now receive disproportionate attention during architecture design.

Teams restructure parallelization schemes, refine gradient accumulation logic, and monitor cross-node latency with far greater scrutiny. The compute map therefore reshapes model design itself, not just procurement strategy.

DeepSeek as a Compute Discipline Signal

What DeepSeek Actually Disclosed That Changes The Compute Conversation

DeepSeek moved the discussion from chip names to cluster behavior when it published its DeepSeek V3 infrastructure analysis. The paper describes a concrete training setup and the engineering choices that made it workable at scale, including a 2048-GPU H800 cluster and a network design to reduce cluster-level network overhead.

One detail matters more than most people realize. DeepSeek reports a Multi-Plane Fat Tree scale-out network in which each node carries eight GPUs and eight InfiniBand network cards, and each GPU is mapped to its own network plane. That design isolates traffic so collective operations do not collapse into the same contention hotspots that appear in conventional topologies.

The same disclosure highlights a hard reality inside constrained clusters. A single queue pair can spray packets across multiple paths, improving utilization, but it creates out-of-order delivery that requires NIC-level reordering support.

DeepSeek also notes that current ConnectX 7 limitations prevent the ideal form of this design from fully materializing, an unusually candid admission that performance ceilings often stem from network behavior rather than GPU math.

Why This Matters More Than Another Model Benchmark

DeepSeek treats computing as a systems problem with measurable levers. Its approach pairs model-side choices that reduce memory pressure with infrastructure-side choices that reduce communication penalty.

That framing translates into a practical discipline that Chinese infra leads already recognize. Teams win by stabilizing throughput and reducing idle time across a large cluster, since those two factors decide real cost per useful token.

A securities research summary of DeepSeek V3 cites a training duration of 2.788 million GPU hours on 2048 H800 GPUs and estimates the training cost under a $2/hour rental assumption.

The Strategic Signal For 2026

DeepSeek Nvidia signals a playbook shift inside China. Serious labs are optimizing around three constraints that keep showing up in real deployments.

- First, they treat network topology and collective communication efficiency as first-class model design inputs, since scale-out bottlenecks erase theoretical FLOPS advantages.

- Second, they build training plans around throughput stability and restart tolerance, since allocation windows and scheduling rules shape how long a run can stay intact. Shenzhen’s official plan explicitly pushes city-level compute scheduling and elastic allocation, which indicates that scheduling and pooling are becoming part of the stack, not an afterthought.

- Third, they invest in portability paths as heterogeneous computing becomes the norm. Industry research on China’s compute scheduling platforms describes provincial- and city-level orchestration spanning heterogeneous resources, thereby increasing the value of software that can move across accelerators and clouds without rewrites.

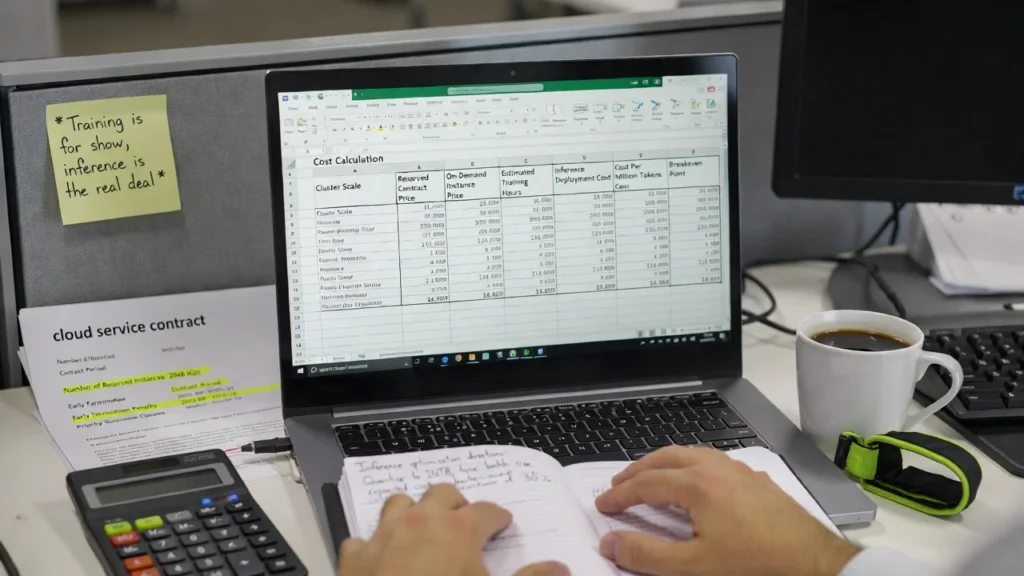

The R1 Cost Shock and Capital Assumption Reset

When DeepSeek R1 entered public discussion, early commentary centered on a training figure of roughly $6 million. That number reinforced the prevailing belief that competitive reasoning models require multi-million-dollar GPU budgets.

Later clarification reframed the narrative. DeepSeek disclosed that the reasoning focused on the R1 stage, which consumed approximately 294,000 dollars in compute on a 512 H800 cluster. The distinction between full base model training and incremental reasoning optimization changed the capital interpretation.

The critical insight is not that frontier AI became cheap. The insight is that marginal capability gains can be layered on top of an existing foundation at far lower incremental cost when the architecture is compute-efficient.

Why Staged Cost Architecture Changes Competitive Assumptions

Traditional capital models assume that performance improvements scale proportionally with hardware expenditure. DeepSeek’s disclosure suggests a different dynamic. Heavy initial investment establishes baseline capability. Subsequent improvements in reasoning can be achieved through focused optimization cycles rather than full-scale retraining.

This separation lowers perceived entry barriers for secondary labs and increases the value of architectural efficiency under constrained hardware access.

In China’s environment, where top-tier GPUs are unavailable, marginal efficiency is amplified.

Investor Repricing of GPU Demand

The market reaction to R1 went beyond mere curiosity about one startup. It reflected uncertainty about the elasticity of marginal GPU demand.

If high-level reasoning can be achieved on compliant Nvidia hardware with disciplined optimization, then the assumption that each performance tier requires unrestricted flagship GPUs weakens.

The DeepSeek Nvidia debate, therefore, shifted from raw chip access to compute return. That reframing altered capital expectations around long-term GPU demand growth.

Open Weights as a Compute Demand Multiplier

DeepSeek’s decision to release open-source weights changed the demand structure for computing within China. Open models enable small and medium-sized enterprises to fine-tune domain-specific variants without having to build foundational models from scratch.

Fine-tuning workloads do not require frontier-scale clusters, yet they still consume meaningful GPU hours across thousands of organizations. This diffuses compute demand across a broader base of actors.

Inference Expansion Becomes the Real Growth Driver

Open weights accelerate deployment. Once a model is adaptable and locally controllable, adoption expands across finance, manufacturing, education, and government services.

Each deployment increases inference demand. Even if training becomes more efficient, total serving volume can rise sharply as applications proliferate.

Efficiency at the training layer does not automatically reduce overall compute consumption. It often redistributes it toward inference and applied workloads.

Why This Strengthens the Domestic Stack

Open weight models create a predictable target for domestic chipmakers. Instead of chasing speculative frontier benchmarks, hardware firms can optimize for real inference workloads tied to a known architecture.

This alignment between open models and domestic accelerators reinforces China’s heterogeneous compute ecosystem.

Cluster Design Under Constraint

Topology Decisions That Directly Affect Scaling

DeepSeek disclosed a 2048-node H800 training cluster using a multi-plane fat-tree network with eight InfiniBand cards per node and traffic isolation across planes. That configuration was chosen to reduce contention for collective communication at scale.

The relevant point is not the marketing scale number. It is the acknowledgment that cross-node synchronization becomes the dominant limiter once clusters expand. Packet reordering and NIC-level handling were explicitly discussed as operational constraints, which confirms that scaling losses in China-based clusters often originate in network behavior rather than raw GPU capability.

Any team planning a frontier run inside China must model communication overhead before committing to a cluster size. Theoretical FLOPS do not translate into linear speedup when bandwidth ceilings and synchronization latency dominate step time.

Scheduling and Allocation Reality

Provincial-level compute scheduling platforms are now operational in regions such as Jiangsu, indicating that pooled resource allocation is becoming institutionalized.

Separate reports have documented underutilized data center capacity in parts of China, with utilization estimates of 20 to 30 percent in some areas. This has led to policy initiatives to trade and reallocate compute resources across regions.

For model labs, this means that allocation stability depends on the scheduler policy and contract priority, not just on hardware ownership. Training plans must assume potential reallocation or bandwidth variability.

Data Pipeline Efficiency Under Scarce GPU Hours

Industry research on China’s compute scheduling platforms identifies heterogeneous hardware and fragmented software environments as major constraints on utilization.

Under these conditions, GPU idle time due to data-pipeline lag directly inflates the cost per useful token. Teams that standardize environments, validate data shards before launch, and monitor end-to-end throughput achieve higher effective output without increasing cluster size.

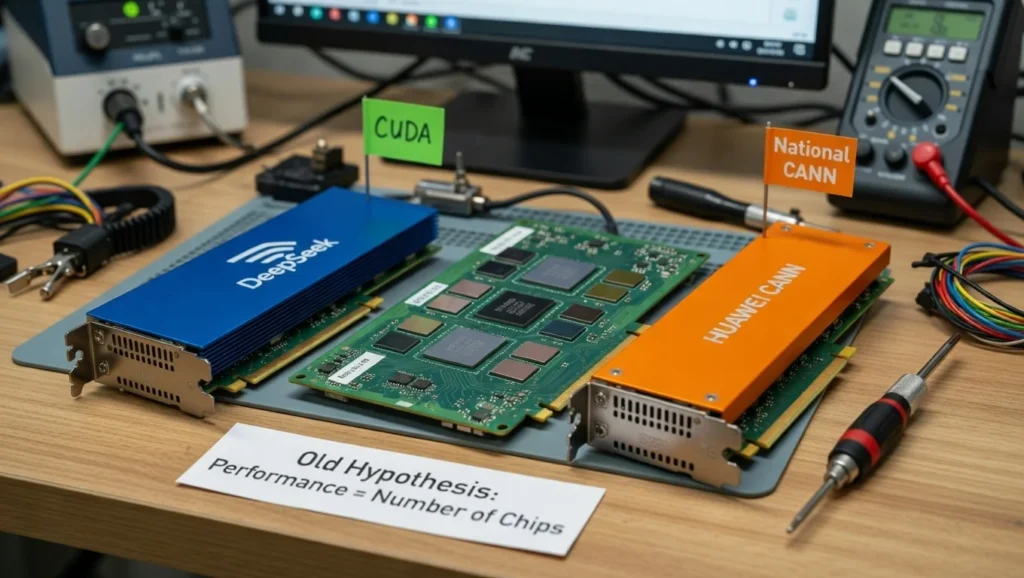

CUDA Dependency as a Strategic Risk in the DeepSeek Nvidia Equation

DeepSeek’s disclosed training environment is built around Nvidia GPUs and the CUDA ecosystem, which remains deeply integrated with PyTorch, NCCL communication libraries, and optimized kernels for large-scale distributed training. Its V3 infrastructure description references NCCL-based collective communication and hardware-level optimization aligned with Nvidia architecture.

The practical implication is straightforward. Even when hardware performance ceilings tighten, the software stack still delivers predictable scaling behavior, mature debugging tools, and broad community validation. That stability reduces execution risk during multi-million GPU-hour training runs.

The Cost of Porting Away From CUDA

Migration to domestic accelerators such as Huawei Ascend requires replacing CUDA-specific kernels, communication libraries, and profiling workflows. Ascend’s CANN stack has progressed, yet developers consistently report ecosystem maturity gaps in kernel optimization depth and framework compatibility compared with CUDA’s long-established tooling base.

Porting a large model training pipeline is therefore an engineering program rather than a procurement swap. It involves operator validation, communication-layer rewrites, precision-handling changes, and retraining stability tests. Under constrained compute windows, few labs can afford extended downtime to complete such transitions.

Why Portability Becomes a 2026 Requirement

China’s push toward heterogeneous compute scheduling increases the probability that labs will operate across multiple accelerator types. Policy initiatives encouraging cross-regional compute pooling reinforce this direction.

In this environment, the DeepSeek Nvidia issue evolves from chip access to software portability. Labs that design abstraction layers early, minimize hard-coded CUDA dependencies, and maintain dual environment validation pipelines will face lower migration friction as domestic accelerators mature through 2026.

Huawei Ascend and Domestic Accelerators in a Post DeepSeek Nvidia Landscape

Huawei’s Ascend 910B has been positioned as China’s most credible domestic alternative for large-scale AI training. Chinese industry reporting and brokerage research note that 910B deployments have expanded across state-backed cloud providers and selected enterprise clusters, particularly for steady throughput workloads rather than experimental frontier scaling.

Benchmarks circulated within domestic technical communities suggest that single-card raw compute performance approaches that of prior-generation Nvidia accelerators under controlled conditions.

The constraint emerges at the ecosystem level, encompassing operator maturity, the depth of the communication library, and framework optimization. These variables influence real-world distributed efficiency more than headline FLOPS.

Where the Gap Still Appears

Ascend’s CANN software stack continues to evolve, yet CUDA benefits from over a decade of kernel tuning and third-party ecosystem reinforcement. Large model training pipelines depend heavily on highly optimized collective communication and memory management. Any inefficiency at that layer compounds across thousands of GPUs.

Engineering teams evaluating migration report that retraining stability and debugging transparency remain areas that require deeper tooling maturity. For frontier labs operating under strict training windows, predictable behavior often outweighs theoretical performance parity.

Adoption Strategy That Reduces Risk

Serious teams in China are not making binary hardware decisions. They are adopting staged migration models. Inference workloads, fine-tuning tasks, and enterprise deployments often start on domestic accelerators where workload characteristics are stable. Core pretraining cycles continue to rely on Nvidia stacks until performance and tooling confidence align.

In the DeepSeek Nvidia context, the strategic move is not an immediate replacement. It is structured diversification with measurable workload segmentation. That approach lowers systemic risk while preserving forward optionality through 2026.

Ecosystem Reaction: Model Release as Chip Roadmap Driver

DeepSeek’s release triggered a coordinated response across China’s semiconductor sector. More than 20 domestic AI chip firms announced compatibility efforts or optimization initiatives to run DeepSeek models.

Companies, including Moore Threads, Biren Technology, and Enflame, positioned their accelerators for inference support. This reaction reveals a structural dynamic. Model releases now influence chip roadmaps, particularly in inference acceleration, where performance requirements are clearer, and migration barriers are lower than in pretraining environments.

Rather than waiting for hardware parity with Nvidia at frontier scale, domestic chipmakers are targeting practical deployment layers first. That strategy aligns with export constraints and accelerates ecosystem maturity.

Cloud Providers as the Gatekeepers of Compute Progress

Allocation stability now determines training timelines more than nominal GPU supply. China’s national and regional computing coordination initiatives, including pooled data center networks, institutionalize quota control, oversubscription, and priority-based scheduling across heterogeneous hardware environments.

For AI labs, the critical variable is contiguous reserved capacity with stable network placement. Without it, wall-clock time expands even when aggregate GPU counts appear sufficient.

Under pooled scheduling systems, long training runs compete with other tenants for bandwidth and rack placement. Preemption risk, queue delay, and cross-rack variability directly influence synchronization efficiency and effective batch stability.

Reserved multi-quarter contracts reduce disruption risk. Flexible allocation increases exposure to reallocation during demand spikes.

In the DeepSeek Nvidia environment, cloud governance shapes model velocity as directly as silicon choice. Infrastructure policy and cluster design function as a single operational system.

Inference Economics and Cost Per Token Discipline

Training Prestige No Longer Guarantees Commercial Edge

DeepSeek Nvidia discussions often center on training scale, yet revenue in China’s AI market increasingly depends on inference efficiency. Enterprise buyers prioritize latency stability, deployment flexibility, and cost per million tokens over benchmark rankings.

When GPU access is priced premium or quota is uncertain, training ambition must align with downstream serving economics.

Chinese brokerage research has highlighted that large model training can consume millions of GPU hours. In DeepSeek’s disclosed case, public analysis referenced approximately 2.788 million GPU hours for a major training cycle on 2048 H800 GPUs. Even at conservative rental assumptions, training represents a multimillion-dollar commitment before inference scaling begins.

That cost only makes sense if inference margins can absorb it.

The Levers That Change Unit Economics

Inference efficiency depends on quantization strategy, batching policy, routing logic in mixture architectures, and hardware placement. In constrained GPU environments, serving optimization can have a greater economic impact than marginal gains from training.

Chinese cloud providers increasingly emphasize optimized inference frameworks and model hosting services tailored for enterprise workloads. That reflects demand for stable, cost-predictable deployments rather than experimental scale.

DeepSeek Nvidia as a Margin Question

When computing is scarce or expensive, the winning model is often the one that delivers acceptable performance at a lower serving cost. Teams that engineer portability across accelerator types and optimize inference stacks can negotiate better cloud contracts and serve more customers per GPU.

In 2026, cost per useful token and latency consistency will likely matter more than raw parameter count in competitive procurement decisions.

Distillation, IP Risk, and the Hidden Cost Debate

How Distillation Compresses Training Expense

Distillation allows a model to learn from the outputs of a stronger system rather than solely from raw human-labeled data. When applied strategically, it can accelerate reasoning alignment and reduce the total compute required for improvement cycles.

If portions of a reasoning model leverage distilled outputs, marginal training cost can decline significantly compared with full-scale independent pretraining.

Legal and Strategic Uncertainty

Distillation introduces intellectual property ambiguity when source outputs originate from proprietary systems. Questions arise regarding whether training on generated responses constitutes fair use, reverse engineering, or trade secret infringement.

Policy debates in the United States increasingly consider how open weight models and cross-model training flows interact with export controls and competitive safeguards.

Why This Matters for DeepSeek Nvidia

If efficiency gains stem partly from cross-model knowledge transfer, then cost comparisons become politically sensitive. Regulatory reaction could tighten controls not only on hardware exports but also on model dissemination.

The Nvidia question, therefore, extends beyond silicon. It intersects with intellectual property frameworks and international governance of open AI systems.

Procurement and Compliance as Architecture Constraints

Export Limits: Define Scaling Ceilings

Export controls restrict interconnect bandwidth and performance characteristics of advanced Nvidia accelerators shipped into China. Modified chips, such as the H20, comply with these limits, which directly affect the efficiency of distributed training at scale.

Interconnect throughput determines synchronization speed in multi-GPU clusters. When bandwidth ceilings apply, scaling curves flatten earlier. Infrastructure teams must benchmark real communication efficiency under compliant hardware rather than rely on theoretical GPU counts.

Contract Structure Impacts Training Continuity

Cloud-based GPU access in China operates under quota and priority systems. Allocation guarantees, reservation windows, and defined scheduling rights influence whether a training run proceeds without interruption.

Labs that secure reserved contiguous capacity reduce disruption risk. Those relying on flexible allocation face higher exposure to queue delays and reallocation during peak demand.

Architecture Must Tolerate Allocation Variability

Given potential allocation shifts, training design requires modular phases and reliable checkpoint logic. Restart tolerance limits loss when capacity changes mid-cycle.

In the DeepSeek Nvidia environment, compliance constraints, procurement structure, and cluster design form a single operational system that determines the effectiveness of model development.

Signals That Will Define the 2026 Outcome

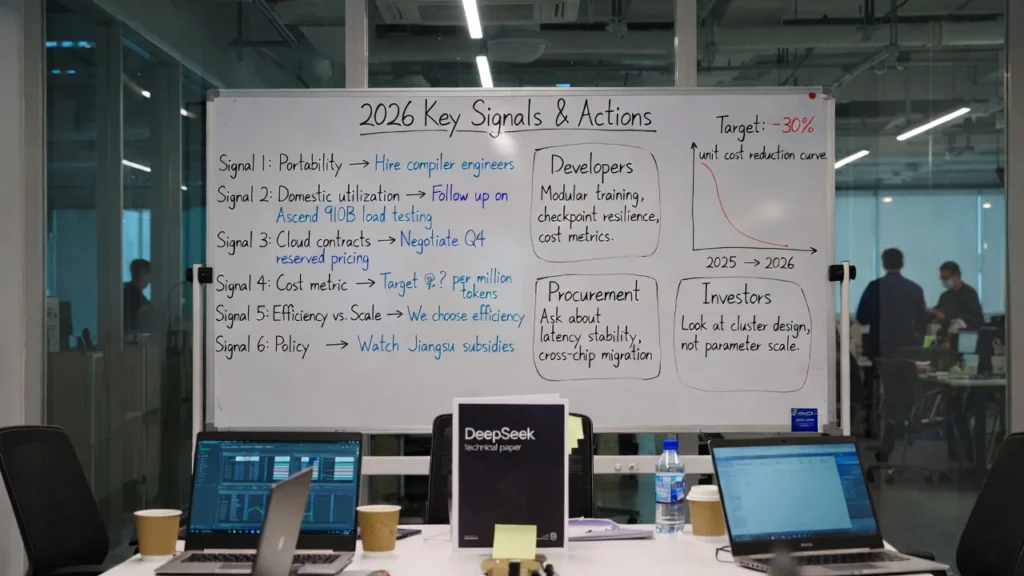

Signal 1: Software Portability Becomes Measurable

The first signal to track is a serious investment in hardware abstraction and multi-accelerator compatibility inside leading Chinese labs. When core training frameworks run with minimal kernel rewrites across Nvidia and domestic accelerators, dependency risk declines.

Evidence will appear in public repositories, hiring patterns focused on compiler and systems engineers, and enterprise case studies that confirm cross-hardware deployment without performance collapse. Portability that works in production, not in demos, will indicate structural maturity.

Signal 2: Domestic Accelerator Utilization Rates Improve

China has built significant data center capacity, yet utilization has varied across regions according to industry reporting. The key metric is sustained high utilization on domestic accelerators tied to real commercial workloads, not policy-driven deployment.

If Ascend-based clusters demonstrate stable throughput in large-scale pretraining or high-volume inference without excessive debugging overhead, confidence in domestic hardware will strengthen.

Signal 3: Cloud Pricing Models Shift Toward Long-Term Commitments

When cloud providers begin offering deeper discounts on multi-quarter reserved GPU contracts, it will signal improved supply visibility and greater confidence in infrastructure. Stable pricing structures indicate that providers are no longer balancing acute scarcity with opportunistic allocation.

Pricing transparency will also reveal whether inference margins are stabilizing across enterprise deployments.

Signal 4: Model Roadmaps Emphasize Cost and Reliability Metrics

In 2026, credible model announcements will highlight cost-per-million-tokens, latency consistency, and cluster efficiency statistics. Reduced emphasis on raw parameter counts will confirm that compute discipline has replaced scale signaling.

The DeepSeek Nvidia discussion will then be less about access anxiety and more about operational execution quality across a heterogeneous stack.

Signal 5: Efficiency Versus Scale Market Bifurcation

The global AI market is increasingly dividing into two strategic paths. One emphasizes cost-optimized open models designed for broad deployment. The other prioritizes large-scale compute concentration to push research frontiers.

China’s ecosystem appears structurally aligned with efficiency-driven, large-scale deployment. The United States continues to invest heavily in computationally intensive frontier research. Both models can coexist, but capital allocation patterns will diverge accordingly.

Signal 6: Political Endorsement and Capital Acceleration

Public endorsement of domestic AI breakthroughs and expanded state investment in semiconductor manufacturing signal an accelerated ecosystem of support.

When provincial governments subsidize computing infrastructure and national leadership elevates private AI founders, capital mobilization intensifies.

This layer influences how quickly domestic accelerators mature and how resilient China’s compute stack becomes under continued export pressure.

Key Takeaways for Builders, Buyers, and Investors

For AI Builders

Treat compute as a controlled variable, not an assumed resource. Benchmark scaling curves under compliant hardware conditions before committing to architecture decisions. Invest early in portability layers that reduce hard dependency on a single accelerator stack.

Design training in modular phases with strong checkpoint discipline to protect progress inside quota-based allocation systems. Measure cost per useful token as a core performance metric alongside accuracy benchmarks.

For Enterprise Buyers

Evaluate model providers on deployment stability and total serving cost, not marketing scale claims. Request clarity on hardware environment, latency variance under load, and portability across accelerator types.

Vendors that operate across heterogeneous compute stacks are better positioned to maintain service continuity if allocation conditions shift.

For Investors

Prioritize teams that demonstrate infrastructure maturity. Signs include transparent cost modeling, documented cluster design strategy, and evidence of successful workload segmentation across training and inference.

Scrutinize reliance on a single hardware source without a migration roadmap. In the DeepSeek Nvidia environment, sustainable advantage comes from system execution, not headline parameter counts.

Across all categories, the central shift is clear. Compute governance now determines model velocity and commercial durability in China’s AI market through 2026.

Final Assessment: What DeepSeek Nvidia Ultimately Signals

DeepSeek Nvidia is a structural signal about control. In China’s AI market, control over compute allocation, communication efficiency, and software portability now determines who can iterate quickly and who stalls.

The first phase of China’s large model race emphasized parameter scale as proof of ambition. The current phase prioritizes throughput stability, restart tolerance, and cost visibility. Engineering teams that model real scaling behavior under export-compliant hardware are building more resilient pipelines than teams that rely on theoretical peak performance.

Hardware access remains important, yet the decisive layer is integration. NVIDIA retains influence through its depth in CUDA and ecosystem maturity. Domestic accelerators continue to close performance gaps and expand deployment share. Cloud providers shape velocity through quota logic and reservation structure. Model labs must align all three layers to sustain progress.

By 2026, advantage will belong to teams that treat compute planning as a permanent strategic function. DeepSeek Nvidia is not about a single chip decision. It reflects a shift toward disciplined infrastructure governance as the foundation of competitive AI development inside China.

What DeepSeek Nvidia Means for Global Strategy — And Where ChoZan Operates

DeepSeek Nvidia is not simply a technology story. It represents a structural shift in how compute governance, capital allocation, and software ecosystems interact inside China’s AI stack. Executives navigating this environment require more than headline awareness. They need disciplined interpretation grounded in infrastructure realities.

This is the layer in which ChoZan operates.

ChoZan Services

- China Digital & Innovation Consulting: Strategic advisory for global firms navigating China’s technology ecosystem, platform dynamics, AI infrastructure shifts, and digital commercialization pathways.

- Corporate Training & Executive Masterclasses: Structured programs that equip leadership, marketing, and technology teams with practical understanding of China’s digital stack, AI deployment models, and platform economics.

- China Learning Expeditions & Innovation Tours: Curated immersion programs providing direct exposure to China’s technology leaders, AI operators, and digital ecosystem builders.

- Expert Dialogues & Strategic Briefings: One-on-one consultations and practitioner-led discussions offering operational insight into China’s AI infrastructure, compute allocation dynamics, and commercialization models.

Book a Consultation

If your organization is evaluating China’s AI ecosystem, infrastructure exposure, or compute-driven strategic risk, schedule a consultation with ChoZan to translate structural complexity into actionable decision frameworks.

Frequently Asked Questions

What does DeepSeek Nvidia mean in 2025 and 2026?

DeepSeek Nvidia refers to the practical dependence Chinese model labs have on Nvidia-class accelerators and the stack decisions forced by constrained access. The phrase signals an operational reality in which model progress is tied to allocation stability, networking efficiency, and software tooling maturity.

What GPUs can Chinese labs realistically use for large model training?

Chinese labs use a mix of compliant Nvidia products offered through cloud providers, legacy clusters acquired earlier, and domestic accelerators that fit specific workloads. Actual usability depends on contiguous allocation windows, network placement, and supported toolchains.

Why does interconnect bandwidth matter so much for large clusters?

Distributed training requires frequent synchronization across GPUs. Interconnect bandwidth and latency determine how quickly gradients move and how efficiently GPUs stay busy. Weak interconnect performance causes step-time inflation, reducing effective throughput.

Why does CUDA remain hard to replace?

CUDA anchors critical components such as kernel libraries, profiling tools, and distributed communication patterns that teams trust in production. Replacing it requires operator parity, stable debugging workflows, and validated performance across scale.

What makes domestic accelerators harder to adopt than expected?

Hardware capability does not equal ecosystem readiness. Training pipelines depend on kernels, compilers, and communication libraries that must behave predictably under load. Migration also requires engineer time for validation and production hardening.

What is the most common mistake labs make under computational constraints?

Many teams plan around nominal GPU counts and ignore scaling efficiency under their real network and scheduler conditions. A smaller stable cluster often delivers more usable progress than a larger unstable cluster.

How do cloud allocation rules affect model roadmaps

Quota systems and priority tiers influence when a lab can run large training phases and how long capacity remains uninterrupted. Unstable allocation forces shorter phases and increases engineering work around checkpointing and recovery.

Why does inference economics dominate commercial outcomes?

Enterprise buyers prioritize predictable cost and stable latency. Inference efficiency determines gross margin and deployment viability. A model that serves customers more cheaply and reliably can win contracts even with slightly lower benchmark scores.

What should enterprises ask vendors to prove stack reliability?

Enterprises should request latency variance during peak load, cost per million tokens, the scope of hardware support, and operational runbooks for incident response. Portability across accelerators reduces service continuity risk.

What signals indicate the strongest position going into 2026?

Strong signals include proven portability efforts, stable large-scale throughput metrics, long-term reserved capacity arrangements, and transparent unit economics on inference. These indicators show execution maturity under constrained compute conditions.

By subscribing to Ashley Dudarenok’s China Newsletter, you’ll join a global community of professionals who rely on her insights to navigate the complexities of China’s dynamic market.

Don’t miss out—subscribe today and start learning for China and from China!

Ashley Dudarenok is a leading expert on China’s digital economy, a serial entrepreneur, and the author of 11 books on digital China. Recognized by Thinkers50 as a “Guru on fast-evolving trends in China” and named one of the world’s top 30 internet marketers by Global Gurus, Ashley is a trailblazer in helping global businesses navigate and succeed in one of the world’s most dynamic markets.

She is the founder of ChoZan 超赞, a consultancy specializing in China research and digital transformation, and Alarice, a digital marketing agency that helps international brands grow in China. Through research, consulting, and bespoke learning expeditions, Ashley and her team empower the world’s top companies to learn from China’s unparalleled innovation and apply these insights to their global strategies.

A sought-after keynote speaker, Ashley has delivered tailored presentations on customer centricity, the future of retail, and technology-driven transformation for leading brands like Coca-Cola, Disney, and 3M. Her expertise has been featured in major media outlets, including the BBC, Forbes, Bloomberg, and SCMP, making her one of the most recognized voices on China’s digital landscape.

With over 500,000 followers across platforms like LinkedIn and YouTube, Ashley shares daily insights into China’s cutting-edge consumer trends and digital innovation, inspiring professionals worldwide to think bigger, adapt faster, and innovate smarter.