Kimi AI Explained: Capabilities, Architecture, and Enterprise Use Cases


The AI assistant market in 2026 is crowded. You have your general-purpose chat tools, your research engines with citations, and your IDE-native coding companions. Kimi AI from Moonshot AI takes a different path. It focuses on completing complex analytical tasks rather than simply generating conversational responses. This distinction matters more now than it did twelve months ago.

What Is Kimi AI? A Short Answer

Kimi AI is a large language model platform developed by Moonshot AI that focuses on reasoning, coding workflows, and large-scale document analysis. The system combines long-context processing, multimodal capabilities, and agent-based task execution to automate complex knowledge work.

Recent models such as Kimi K2 and K2.5 use a mixture-of-experts architecture designed to scale reasoning ability while controlling compute costs.

The latest generation also introduces Agent Swarm, an agent orchestration system designed for complex parallel workflows.

Key Takeaways

- Kimi AI is a reasoning-focused AI platform developed by Moonshot AI. It is designed to handle complex analytical tasks such as research synthesis, coding workflows, and large document analysis.

- The Kimi model family uses a Mixture of Experts architecture that activates only a portion of the network during inference to improve efficiency.

- Kimi K2.5 introduced multimodal reasoning and an Agent Swarm system that coordinates up to 100 parallel sub-agents for complex tasks. This approach speeds up large workflows by executing multiple steps simultaneously.

- Developers can access Kimi via APIs or open model deployments, making it easier to integrate the system into research tools, coding environments, and enterprise applications.

- Organizations often evaluate Kimi alongside models such as DeepSeek, Claude, and GPT, choosing tools based on the type of tasks they need to automate.

The Moonshot AI Story

Understanding the company behind the tool provides useful context. Moonshot AI was founded by Yang Zhilin, a former professor who worked on AI projects at major technology firms. The company has grown rapidly.

In early 2026, Moonshot sought to raise up to $1 billion at an $18 billion valuation, following a previous round that valued the company at $10 billion. Backers include Alibaba and Tencent.

Moonshot launched a product called Kimi Claw that utilizes the latest model and reportedly generated monthly sales exceeding the company’s total revenue for the entire preceding year. The company sells subscription plans for its chatbot and provides its underlying technology to enterprise clients.

What Is Kimi AI and Why It Matters in 2026

In 2026, the platform evolved from a chat interface into a system used for structured research workflows, coding tasks, and knowledge analysis.

The Core Idea Behind Kimi AI

The system was designed around one central capability. It can process very large volumes of information within a single reasoning session.

Early versions already supported extremely long context windows. The first public version accepted about 128,000 tokens of input, which allowed entire research documents or large code repositories to be analyzed together.

Later models extended this ability further. The reasoning-focused Kimi K2 Thinking model expanded the context window to roughly 256,000 tokens while maintaining stable tool usage across complex tasks.

In practical terms, this means a user can upload multiple files and ask the system to analyze them together rather than splitting the task into smaller prompts.
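Before sending several files in one request, it helps to estimate whether they fit in the context window. The sketch below is a minimal illustration, assuming an average of four characters per token, which is only a heuristic because the real count depends on the model's tokenizer. The helper names `estimate_tokens` and `fits_in_context` are hypothetical and not part of any Kimi SDK.

```python
# Heuristic only: real token counts depend on the model's tokenizer.
CHARS_PER_TOKEN = 4        # assumed average, not a Kimi-specific figure
CONTEXT_WINDOW = 256_000   # approximate window cited above for K2 Thinking


def estimate_tokens(text: str) -> int:
    """Estimate the token count of a string from its character length."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(documents: dict, reserve_for_output: int = 8_000) -> tuple:
    """Return (fits, estimated_input_tokens) for a batch of named documents."""
    total = sum(estimate_tokens(body) for body in documents.values())
    return total + reserve_for_output <= CONTEXT_WINDOW, total


if __name__ == "__main__":
    docs = {"report.txt": "x" * 400_000, "notes.txt": "y" * 200_000}
    fits, total = fits_in_context(docs)
    print(f"estimated input tokens: {total}, fits in one request: {fits}")
```

If the estimate exceeds the window, the batch has to be split or trimmed, which is exactly the situation long-context models are meant to make less common.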

The 2025 Model Shift

Moonshot AI accelerated development during 2025 with a series of new models.

The major release was Kimi K2, a mixture-of-experts model with about 1 trillion total parameters, of which roughly 32 billion are active during inference.

This architecture allowed the system to scale reasoning power without dramatically increasing runtime cost.

The model also introduced stronger coding ability and agent-style reasoning. These improvements allowed the system to call tools, process large datasets, and execute multi-step workflows.

What Changed in 2026

In January 2026, Moonshot released Kimi K2.5, a multimodal reasoning model trained on roughly fifteen trillion text and visual tokens.

The model introduced several capabilities that changed how teams use the system:

- Multimodal reasoning: The model can analyze text, images, and visual documents within a single workflow.

- Agent swarm execution: Large tasks can be divided across many parallel agents working together.

- Research automation: The system can gather information, structure findings, and generate reports or presentations automatically.

Some configurations can coordinate as many as one hundred parallel agents and thousands of tool calls for complex workflows.

These features move the system closer to a digital research assistant than a traditional chatbot.

Why This Matters For AI Buyers

Many AI systems focus on conversational responses. That capability is easy to demonstrate but difficult to operationalize inside real business workflows.

Kimi AI focuses on a different layer of value.

The platform supports long-context analysis, multi-step reasoning, and agent-driven automation. These capabilities allow teams to run complex workflows such as:

- analyzing hundreds of pages of research material

- reviewing large code repositories

- generating structured research reports

- automating knowledge-intensive internal processes

For companies evaluating generative AI in 2026, this distinction matters. Conversational AI answers questions. Agent-based reasoning systems complete complex knowledge tasks.

This is why discussions about Kimi AI chat or Kimi AI chatbot only describe the interface. The real capability lies in the reasoning system and workflow automation behind it.

Kimi AI Features and Model Architecture

Understanding Kimi AI features requires looking inside the model architecture rather than focusing on the chat interface. The Kimi model family was designed to balance three competing priorities that shape enterprise AI systems today. These priorities include reasoning ability, deployment efficiency, and developer flexibility.

Moonshot AI approached this challenge by building the Kimi models around a sparse architecture that scales capability without dramatically increasing runtime cost.

Mixture of Experts Architecture

The core design of the model family uses a Mixture of Experts architecture. Kimi K2 and K2.5 contain roughly one trillion total parameters, yet only about 32 billion are activated for each token during inference.

This structure allows the system to maintain the capacity of a very large model while limiting the compute required per request. Instead of processing the entire parameter set, the system routes each token through a small subset of specialized expert networks.

In the Kimi architecture, hundreds of experts are distributed across the network. During inference, only a small number participate in each reasoning step.

This routing approach reduces computational cost while preserving the knowledge diversity of a trillion-parameter model.
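The routing idea can be illustrated with a toy example. This is a minimal sketch of generic top-k expert routing, not Moonshot's implementation: the expert count, the router scores, and the choice of `top_k=2` are made-up values, and each "expert" is a trivial function standing in for a feed-forward network.

```python
import math


def softmax(scores: list) -> list:
    """Numerically stable softmax over router scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_scores: list, top_k: int) -> dict:
    """Pick the top_k experts for one token and renormalise their gate weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    mass = sum(probs[i] for i in chosen)
    return {i: probs[i] / mass for i in chosen}


def moe_layer(x: float, router_scores: list, experts: list, top_k: int = 2) -> float:
    """Weighted sum over the selected experts only; the others are never evaluated."""
    gates = route_token(router_scores, top_k)
    return sum(weight * experts[i](x) for i, weight in gates.items())


if __name__ == "__main__":
    # Toy experts: each simply scales its input by a different factor.
    experts = [lambda x, k=k: x * k for k in range(8)]
    scores = [0.1, 2.0, 0.3, 1.5, -1.0, 0.0, 0.2, 0.4]
    print("selected experts and weights:", route_token(scores, top_k=2))
    print("layer output:", moe_layer(1.0, scores, experts, top_k=2))
    # Share of parameters active per token, using the article's approximate figures.
    print(f"active share: {32e9 / 1e12:.1%}")
```

With the approximate figures quoted above, about 3.2 percent of the parameters take part in processing any single token, which is where the compute savings come from.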

Multi-head Latent Attention Design

Another defining capability inside the architecture is the attention mechanism used to process large inputs.

The model uses multi-head latent attention and large-vocabulary embeddings to handle long reasoning sessions. The attention system allows the model to keep its reasoning stable across very large inputs such as technical documentation or large codebases.

This capability is particularly important for research and engineering workflows that depend on large amounts of data.

Native Multimodal Capability

The latest generation of the model introduces multimodal reasoning.

Kimi K2.5 was trained on roughly 15 trillion tokens that combined visual and textual data during pretraining.

Because vision and language were trained together from the beginning, the model can interpret screenshots, diagrams, and interface designs alongside text instructions.

This capability enables several developer-oriented workflows.

- Interface prototypes can be converted into working front-end code.

- Technical diagrams can be translated into documentation.

- Visual datasets can be analyzed together with written research material.

These features allow the system to operate as a hybrid reasoning and development assistant.

Agent Reasoning Infrastructure

Another important component of the architecture is the agent reasoning framework.

Kimi models support long reasoning chains that combine internal analysis with tool execution. Some configurations can perform hundreds of tool calls during a single problem-solving session.

This capability allows the system to perform extended tasks such as data research, document synthesis, and structured report generation.
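The pattern of interleaving reasoning with tool execution can be sketched as a simple loop. The example below is an illustration under stated assumptions: `scripted_model` is a stand-in for a real model call, the `TOOLS` registry and the step format are hypothetical, and the default budget of 300 tool calls is an arbitrary value chosen to echo the "hundreds of tool calls" figure.

```python
from typing import Callable, Dict, List

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: Dict[str, Callable[..., str]] = {
    "word_count": lambda text: str(len(text.split())),
    "upper": lambda text: text.upper(),
}


def scripted_model(history: List[dict]) -> dict:
    """Stand-in for a real model call; picks the next step from the history so far."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "name": "word_count",
                "args": {"text": history[0]["content"]}}
    return {"type": "final",
            "content": f"The input has {tool_results[-1]['content']} words."}


def run_agent(task: str, model=scripted_model, max_tool_calls: int = 300) -> str:
    """Alternate model steps and tool execution until a final answer or the budget runs out."""
    history = [{"role": "user", "content": task}]
    calls = 0
    while True:
        step = model(history)
        if step["type"] == "final":
            return step["content"]
        if calls >= max_tool_calls:
            raise RuntimeError("tool-call budget exhausted before a final answer")
        result = TOOLS[step["name"]](**step["args"])
        # Feed the tool result back so the next model step can build on it.
        history.append({"role": "tool", "name": step["name"], "content": result})
        calls += 1


if __name__ == "__main__":
    print(run_agent("one two three"))
```

The explicit budget matters in practice: a long reasoning chain needs a stopping rule so that a confused agent cannot call tools indefinitely.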

The architecture also supports an Agent Swarm framework, where multiple specialized agents operate simultaneously during complex tasks.

Parallel reasoning allows large workflows to complete faster than sequential prompt execution.

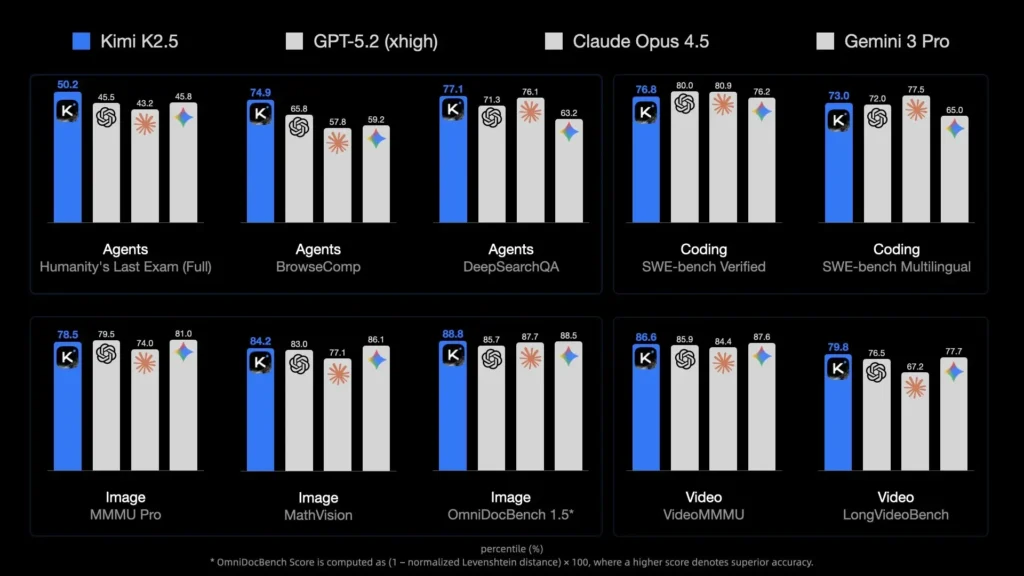

Benchmark Performance

Independent benchmarks provide a useful view of how the architecture performs in practice.

The reasoning-focused Kimi K2 Thinking model scored 41 on the Artificial Intelligence Index, significantly above the median score of 27 among comparable open models.

The model also demonstrates strong performance across several technical benchmarks. On software engineering tasks such as SWE-bench Verified, it scores above 70 percent. On mathematical reasoning benchmarks such as AIME 2025, it performs strongly relative to many open models.

These results position Kimi among the most capable open-weight reasoning models currently available.

Deployment Flexibility

Another feature that attracts enterprise interest is deployment flexibility.

Unlike many frontier models that operate exclusively through closed APIs, Kimi models are available with open weights. Developers can deploy them locally, integrate them into private infrastructure, or fine-tune them for specialized tasks.

This approach gives organizations more control over data handling, infrastructure costs, and system customization. For teams that require on-premises deployment or internal model modification, this flexibility becomes a decisive advantage.

Agent Swarm: The Feature That Changes Workflows

The most discussed addition in K2.5 is Agent Swarm. Most AI models work like a single person doing everything. They read your question, think it through, and write back. One brain, one task, one answer at a time. Agent Swarm changes this architecture.

K2.5 can decompose a complex request into parallel subtasks and delegate them to dynamically instantiated subagents. The model can coordinate up to 100 of these agents and handle up to 1,500 steps in a single operation. Tasks that normally take hours can finish in minutes.

Each agent handles a specific part of the task while the orchestrator coordinates the results. The model then combines those outputs into a single structured report. In practice, this reduces the amount of manual prompting required to complete large research tasks.
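The fan-out and merge pattern described here can be sketched with ordinary concurrency primitives. This is a generic illustration, not Moonshot's orchestrator: `run_subagent` is a hypothetical placeholder that only simulates work, and the sketch assumes the list of subtasks has already been produced, whereas in K2.5 the model itself performs the decomposition.

```python
import asyncio

MAX_PARALLEL_AGENTS = 100  # ceiling cited for K2.5's Agent Swarm


async def run_subagent(subtask: str) -> str:
    """Hypothetical sub-agent; a real one would call the model and its tools."""
    await asyncio.sleep(0.01)  # simulate work
    return f"findings for {subtask!r}"


async def orchestrate(subtasks: list, limit: int = MAX_PARALLEL_AGENTS) -> str:
    """Fan subtasks out to concurrent sub-agents, then merge results in input order."""
    gate = asyncio.Semaphore(limit)  # caps how many sub-agents run at once

    async def guarded(subtask: str) -> str:
        async with gate:
            return await run_subagent(subtask)

    results = await asyncio.gather(*(guarded(s) for s in subtasks))
    return "\n".join(f"{i}. {r}" for i, r in enumerate(results, start=1))


if __name__ == "__main__":
    print(asyncio.run(orchestrate(["pricing", "benchmarks", "licensing"])))
```

Because the sub-agents run concurrently, total wall-clock time is closer to the slowest subtask than to the sum of all of them, which is the source of the speed-up over sequential prompting.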

The feature has limits. The quality of the output depends on how well you define the task. Vague instructions produce scattered results. But when you have a well-scoped project that benefits from parallel execution, the efficiency gains are real.

Kimi AI API and Developer Ecosystem

Moonshot AI provides several access paths to the model family. Teams can interact through the chat interface, connect applications through the API, or deploy the open weights inside private infrastructure.

This range of options allows the platform to function as a foundation layer for software products rather than only as a standalone assistant.

API Structure and Integration

The Kimi AI API follows a structure that many developers already recognize. The service exposes endpoints similar to widely used AI APIs, which allows existing applications to connect with minimal architectural changes.

Developers can send prompts, upload files, trigger tool calls, and receive structured responses through standard requests.

The API supports several usage patterns.

- Chat requests: Applications can send conversational prompts and receive generated text responses.

- Tool execution: Developers can connect external tools so the model can retrieve data, run functions, or perform automated actions.

- Structured output generation: The system can return formatted data such as JSON, reports, or code modules.

This flexibility allows Kimi to operate inside customer support systems, research platforms, coding tools, or internal productivity software.
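A chat request with an optional tool definition might be assembled as follows. This is a sketch, assuming an OpenAI-style `/chat/completions` route and tool schema. The base URL, the model identifier `kimi-k2.5`, the `MOONSHOT_API_KEY` variable name, and the `search_docs` tool are all assumptions for illustration and should be checked against the provider's documentation. The example builds the request without sending it, so it runs offline.

```python
import json
import os
import urllib.request
from typing import List, Optional

# Assumptions, not confirmed by this article: verify against the provider's docs.
BASE_URL = "https://api.moonshot.ai/v1"  # assumed OpenAI-style base URL
MODEL_ID = "kimi-k2.5"                   # placeholder model identifier


def build_chat_request(prompt: str, api_key: str,
                       tools: Optional[List[dict]] = None) -> urllib.request.Request:
    """Assemble (but do not send) a chat-completions style POST request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    if tools:
        payload["tools"] = tools  # function-calling schema in the widely used format
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Perform the network call and decode the JSON response."""
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read())


if __name__ == "__main__":
    # Hypothetical tool the model could ask the application to run.
    search_tool = {
        "type": "function",
        "function": {
            "name": "search_docs",
            "description": "Search internal documentation.",
            "parameters": {
                "type": "object",
                "properties": {"query": {"type": "string"}},
                "required": ["query"],
            },
        },
    }
    key = os.environ.get("MOONSHOT_API_KEY", "sk-placeholder")
    req = build_chat_request("Summarise our onboarding guide.", key, [search_tool])
    print(req.get_method(), req.full_url)
    print(json.dumps(json.loads(req.data), indent=2))
    # send(req) would perform the real call; it is omitted so the sketch runs offline.
```

Keeping request construction separate from sending makes the payload easy to inspect and unit-test before any tokens are spent.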

Deployment Options

Moonshot AI also supports multiple deployment approaches.

- Hosted API access: Developers connect to the official Moonshot infrastructure and pay for usage based on token consumption.

- Self-hosted deployment: Organizations can download model weights and run them inside private infrastructure.

- Hybrid deployment: Teams may run sensitive workloads locally while using the hosted API for large-scale tasks.

This range of options gives engineering teams more control over cost management and data governance.
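A hybrid setup needs some rule for deciding where each job runs. The sketch below shows one possible policy, assuming that sensitive data must stay on private infrastructure and that the self-hosted cluster has a per-job size limit. The `Job` fields, the backend labels, and the `LOCAL_TOKEN_CAPACITY` value are hypothetical.

```python
from dataclasses import dataclass

LOCAL_TOKEN_CAPACITY = 50_000  # hypothetical per-job limit of the self-hosted cluster


@dataclass(frozen=True)
class Job:
    name: str
    contains_sensitive_data: bool
    estimated_tokens: int


def choose_backend(job: Job) -> str:
    """Route a job to 'self-hosted' or 'hosted-api' under a simple governance policy."""
    if job.contains_sensitive_data:
        return "self-hosted"   # sensitive data never leaves private infrastructure
    if job.estimated_tokens > LOCAL_TOKEN_CAPACITY:
        return "hosted-api"    # large non-sensitive jobs use hosted capacity
    return "self-hosted"       # small jobs stay local to avoid per-token fees


if __name__ == "__main__":
    for job in (Job("contract review", True, 900_000),
                Job("public web crawl summary", False, 900_000),
                Job("meeting memo", False, 1_000)):
        print(f"{job.name}: {choose_backend(job)}")
```

Real policies usually add more dimensions, such as latency targets or regional data rules, but the principle of checking governance constraints before cost is the same.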

Pricing Model

Another reason developers explore the platform is its pricing structure. Listed pricing for Kimi K2.5 is about $0.60 per million input tokens and roughly $3.00 per million output tokens, though exact rates vary by provider.

This price level places the system among the more cost-efficient reasoning models available to developers.

For organizations running large document analysis pipelines or research automation systems, token costs can significantly affect the overall operating budget.
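To see how token prices translate into a budget, the arithmetic can be written out directly. The sketch below uses the approximate K2.5 rates quoted above. The workload of 200,000 input tokens and 4,000 output tokens per run is a made-up example, and actual rates depend on the provider.

```python
# Approximate rates quoted in this article; actual pricing varies by provider.
INPUT_USD_PER_MILLION = 0.60
OUTPUT_USD_PER_MILLION = 3.00


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the per-million-token rates above."""
    return (input_tokens * INPUT_USD_PER_MILLION
            + output_tokens * OUTPUT_USD_PER_MILLION) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: a 200,000-token document set with a 4,000-token report.
    per_run = request_cost(200_000, 4_000)
    print(f"${per_run:.4f} per run")
    print(f"${per_run * 1_000:.2f} per 1,000 runs")
```

At these rates the example run costs about $0.13, so a pipeline executing it a thousand times would spend roughly $132. Input tokens dominate the bill in document-heavy workloads.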

Why Developers Experiment With Kimi

AI platforms usually gain adoption through developer communities rather than marketing campaigns.

Moonshot AI lowered the barrier to experimentation in several ways.

- The API design aligns with existing developer standards.

- Open weights allow model customization.

- Multiple deployment options support different security requirements.

Because of these decisions, startups and research teams often test the model family when building applications that rely on automated analysis or complex reasoning tasks.

How Good Is Kimi AI for Enterprise Use

How good Kimi AI is depends entirely on what you need it to do. For long-context document workflows, the model excels. This capability allows teams to analyze large internal documents and research datasets within a single workflow.

For coding tasks, the performance is strong but specialized. The model handles HTML, React, and front-end work particularly well. It can transform ideas into fully functional, responsive products.

The visual coding capability, where you upload a design and get working code, saves hours of manual layout work. For backend or systems programming, you might prefer a tool more tightly integrated with your IDE. But for front-end work and prototyping, K2.5 delivers.

When people ask how good Kimi AI is for research, the answer is positive with one caveat. The model maps fields in layers. It organizes themes into categories, identifies subquestions, and suggests competing schools of thought. This structured overview saves hours compared to starting from scratch. But you must verify the outputs.

Kimi AI Alternatives and Market Position

Kimi operates in a competitive AI model landscape where several systems target similar tasks. Developers and organizations often compare it with other reasoning-focused models when choosing tools.

One comparison is with DeepSeek models, which emphasize efficient reasoning and lower operating costs. These systems gained attention in 2025 after releasing open models that performed strongly on reasoning benchmarks.

Another category includes models such as Claude and GPT, which focus on strong conversational ability and broad general knowledge. These tools are widely used for writing, research assistance, and customer support.

Kimi’s positioning is slightly different. The platform emphasizes long document analysis, coding support, and structured research tasks rather than conversational interaction alone.

Because of this focus, the model often appeals to developers and research teams who need systems capable of handling complex analytical workloads.

In practice, most organizations test several models before committing to production systems. Each platform performs best in different analytical or operational scenarios.

Learn From China’s Innovation Ecosystem with ChoZan

Technologies like Kimi AI are part of a broader shift in China’s digital economy. Platforms, AI systems, and consumer ecosystems often evolve faster in China than in other markets. Many global companies study these developments to understand how technology, commerce, and customer behavior are changing.

ChoZan is a Chinese digital transformation consultancy founded by China tech expert Ashley Dudarenok. The firm helps multinational companies and global technology teams understand China’s fast-moving digital ecosystem and apply those insights to their own strategies.

What ChoZan Helps Companies Do

- Digital China Advisory: ChoZan works with global brands and technology firms to translate China’s digital transformation into practical business strategies and market insights.

- Executive Briefings and Corporate Training: Leadership teams receive structured briefings and workshops on topics such as AI adoption, social commerce, platform ecosystems, and the future of digital retail in China.

- China Innovation Tours and Learning Expeditions: ChoZan organizes curated programs that enable executives to meet with Chinese technology companies and study digital innovation firsthand in the market.

- Research and Trend Analysis: Companies work with ChoZan to track emerging trends in AI, digital commerce, and consumer behavior across China’s technology ecosystem.

Work With ChoZan

If your team wants to understand how innovations like Kimi AI fit into China’s broader technology landscape, ChoZan provides research, advisory support, and executive learning programs designed for global leadership teams.

Book a consultation with ChoZan to explore how China’s digital innovation can inform your technology and strategy decisions.

FAQs about Kimi AI

1. What is Kimi AI used for?

Kimi AI is typically used for research synthesis, coding assistance, document analysis, and structured knowledge generation. The system is designed to help users process large datasets and produce outputs such as reports, code modules, and summaries.

2. Who created Kimi AI?

Kimi AI was developed by Moonshot AI, a Beijing-based artificial intelligence startup founded in 2023. The company focuses on building large language models designed for reasoning, coding, and automation workflows.

3. Is Kimi AI open source?

Several models in the Kimi ecosystem are released with open weights or developer access. For example, Kimi K2.5 is distributed with open model access, allowing developers to run it locally or integrate it into custom AI applications.

4. Does Kimi AI support multimodal inputs?

Yes. Kimi K2.5 can process multiple data types, including text, images, and visual documents. This allows the system to analyze diagrams, screenshots, or datasets together with written instructions in a single workflow.

5. Can developers integrate Kimi AI into software products?

Yes. Developers can connect applications to Kimi through APIs or run the model locally. This allows integration into coding assistants, research platforms, enterprise tools, or internal knowledge systems.

6. Is Kimi AI available globally?

Kimi AI services are accessible globally through web platforms and developer APIs. Many international developers experiment with the models for research automation, coding tools, and analytical applications.

7. What types of tasks does Kimi AI handle well?

Kimi performs well on analytical tasks that involve large amounts of information. Examples include research reviews, technical documentation analysis, code generation, and structured data extraction from complex datasets.

8. How is Kimi AI different from traditional chatbots?

Traditional chatbots mainly answer questions or provide conversational responses. Kimi models are designed to execute complex tasks such as research analysis, coding workflows, and automated document generation.

9. What industries are experimenting with Kimi AI?

Organizations in software development, finance, education, and research fields are experimenting with Kimi models. Many teams test the system for document analysis, coding assistance, and knowledge management workflows.

10. Can Kimi AI be used for automation workflows?

Yes. Developers often use Kimi to automate repetitive analytical tasks such as summarizing reports, generating documentation, or analyzing datasets. These workflows allow teams to reduce manual research and data processing work.


Ashley Dudarenok is a leading expert on China’s digital economy, a serial entrepreneur, and the author of 11 books on digital China. Recognized by Thinkers50 as a “Guru on fast-evolving trends in China” and named one of the world’s top 30 internet marketers by Global Gurus, Ashley is a trailblazer in helping global businesses navigate and succeed in one of the world’s most dynamic markets.

She is the founder of ChoZan 超赞, a consultancy specializing in China research and digital transformation, and Alarice, a digital marketing agency that helps international brands grow in China. Through research, consulting, and bespoke learning expeditions, Ashley and her team empower the world’s top companies to learn from China’s unparalleled innovation and apply these insights to their global strategies.

A sought-after keynote speaker, Ashley has delivered tailored presentations on customer centricity, the future of retail, and technology-driven transformation for leading brands like Coca-Cola, Disney, and 3M. Her expertise has been featured in major media outlets, including the BBC, Forbes, Bloomberg, and SCMP, making her one of the most recognized voices on China’s digital landscape.

With over 500,000 followers across platforms like LinkedIn and YouTube, Ashley shares daily insights into China’s cutting-edge consumer trends and digital innovation, inspiring professionals worldwide to think bigger, adapt faster, and innovate smarter.