The conversation around Chinese large language models shifted in early 2026. It moved from speculative potential to demonstrable production reality. Zhipu AI, the Beijing-based startup valued at over six billion dollars after its January Hong Kong IPO, now sits at the center of this shift.

Enterprise teams globally are looking at the GLM model family not just as another open-source alternative but as a legitimate infrastructure contender. The question is no longer whether the technology works. The question is how to evaluate it, where to deploy it, and what risks emerge when you do.

What Zhipu AI Is in 2026

Zhipu AI is a Chinese AI company built around the GLM model family. In 2026, the better way to read it is as a platform company with a growing enterprise focus, rather than a single-model story.

That shift matters because Zhipu is now trying to sell a fuller stack. The company is consolidating models, API access, agent products, and multimodal tools into a single commercial system. For buyers, the real question is no longer basic model capability. The real question is how well this stack performs inside actual business workflows.

Its market position also changed in early 2026. Zhipu raised HK$4.35 billion in its Hong Kong debut on January 8, 2026, which marked the company’s transition into a more public commercial phase. That gave it more visibility and more pressure to prove product traction.

One month later, Zhipu released GLM 5. Reuters reported that the new model offers stronger coding ability and longer agent task execution. That tells you where the company wants to compete. The pitch is moving toward applied work and automation use cases.

So, in practical terms, Zhipu AI in 2026 is a Chinese platform company using GLM as the base for a wider enterprise AI push. That is the most useful starting point for evaluating its products, fit, and risks.

How the GLM Model Family Is Structured

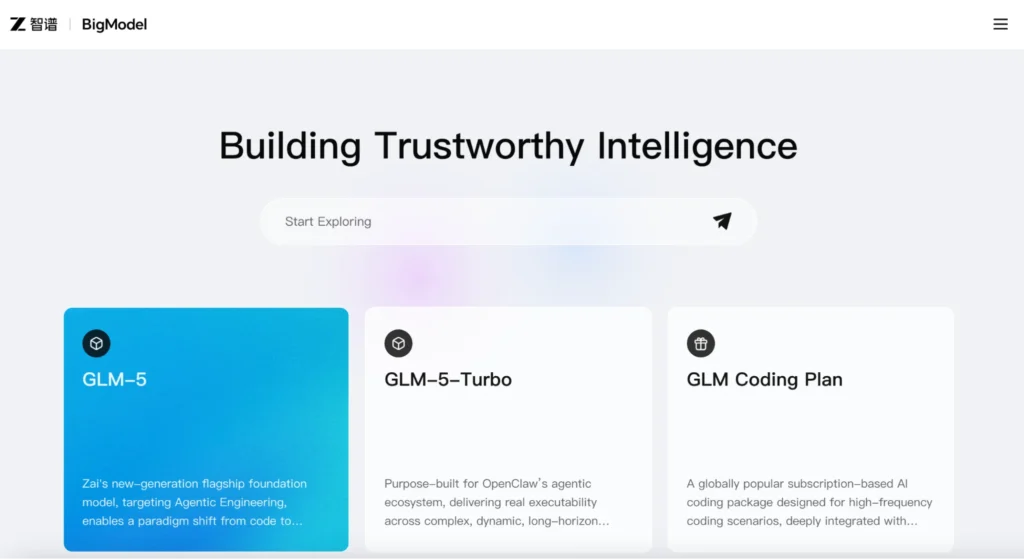

The GLM family now reads as a progression rather than a loose set of versions. Zhipu has been pushing the line toward a single goal: models that can reason, code, use tools, and carry out work across longer chains of action. Its own product material frames GLM 4.5 around unified reasoning, coding, and agent abilities, while GLM 5 is presented as the next step for complex systems engineering and long-horizon agent tasks.

That evolution matters because it gives the family a clear structure. GLM 4.5 opened the agent path in July 2025 as an open-source release aimed at intelligent-agent applications. It gave Zhipu a practical model lane for teams that wanted real deployment value, not only a flagship label.

By early 2026, the center of gravity had moved higher. GLM 5 became the flagship tier, with a 744-billion-parameter mixture-of-experts design, 40 billion active parameters per inference, 28.5 trillion training tokens, and a 200K context window. Those numbers matter because they support larger codebases, longer task chains, and a more stable state across multi-step execution.
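A quick arithmetic check puts those figures in proportion. The sketch below uses only the numbers quoted above; the variable names are illustrative.

```python
# Figures quoted in the text for GLM 5.
TOTAL_PARAMS = 744e9        # total parameters in the mixture-of-experts model
ACTIVE_PARAMS = 40e9        # parameters active per inference
TRAINING_TOKENS = 28.5e12   # training tokens

# Share of the model that does work on any single inference.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Training tokens per total parameter, a rough data-to-size ratio.
tokens_per_param = TRAINING_TOKENS / TOTAL_PARAMS

print(f"active share per inference: {active_fraction:.1%}")
print(f"training tokens per parameter: {tokens_per_param:.1f}")
```

Roughly 5.4 percent of the parameters are active at a time, which is why a model of this size can still be served at a cost closer to that of a much smaller dense model.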

How the Tiers Fit Together

GLM 4.6 sits in the middle as a refinement layer. Z.ai describes it as stronger in real-world coding, long context processing, reasoning, search, and agent work. It also pushed the context window to 200K, making the family more useful for longer working sessions.

GLM 4.7 then sharpened that path. Z.ai says it improved multilingual agentic coding, terminal-based tasks, and stable multi-step reasoning and execution. That makes it a bridge between the earlier open-source agent line and the more ambitious GLM 5 tier.

At the top end of execution, GLM 5 Turbo adds a more specialized lane. Z.ai’s developer docs position it for tool invocation, timed tasks, persistent tasks, and long-chain execution. That tells buyers the family is no longer organized solely around chat. It is organized around workload type.

So the skimmable reading is simple.

- GLM 4.5 opened the agent-oriented path.

- GLM 4.6 and GLM 4.7 refined long context, coding, and execution.

- GLM 5 became the flagship for deeper reasoning and longer task horizons.

- GLM 5 Turbo pushes the family further into execution-focused workflows.

What Stands Out Technically and Commercially

The clearest signal is direction. Zhipu AI is pushing toward agent work, coding, and practical deployment, not just chat. That became sharper with GLM 5, which Zhipu launched in February 2026 as its flagship for coding and long-running agent tasks.

Price and access also stand out. Zhipu has not kept its story at the premium end only. In July 2025, it released GLM 4.5 as an open-source model for intelligent-agent applications, providing developers with a lower-friction path into the stack.

The product mix is another strong signal. The current stack spans GLM 5, visual models such as GLM 4.6V, and agent-oriented products such as AutoGLM. That tells buyers the company is building for broader workflow use, not one narrow interface.

Why This Matters

For evaluation, three things matter most.

- First, Zhipu is leaning hard into agent execution. So, tool use and multi-step performance matter more than polished chat output.

- Second, the open-source push around GLM 4.5 points to a commercial strategy built around adoption, not just prestige.

- Third, the platform already provides models, vision, and tools in a single stack. That can make testing and deployment simpler for teams that want continuity across use cases.

Access and Integration Paths

Zhipu offers buyers multiple entry points, which is useful because not every team starts at the same place. A business user can begin with Z.ai or Zhipu Qingyan-style assistant products, while a developer team can move straight to the API layer. Larger organizations can approach the stack through MaaS and sales-led integration.

The API path is the most practical route for technical evaluation. Z.ai’s developer docs support direct calls through cURL, the official Python SDK, Java SDK, and OpenAI-compatible SDK patterns. That lowers switching friction for teams that want to test Zhipu AI API access inside existing workflows instead of rebuilding their stack from scratch.
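To make the OpenAI-compatible pattern concrete, here is a minimal sketch that builds a chat completion request with the Python standard library and does not send it. The base URL and model ID are placeholders, not real values, and the `/chat/completions` path is assumed from the OpenAI convention; take the actual values from the vendor’s developer docs.

```python
import json
import urllib.request

# Placeholder values: substitute the base URL and model ID from the vendor's docs.
BASE_URL = "https://example.invalid/v1"   # hypothetical, not a real endpoint
MODEL_ID = "glm-model-id"                  # hypothetical model identifier


def build_chat_request(prompt: str, api_key: str,
                       base_url: str = BASE_URL,
                       model: str = MODEL_ID) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("Summarize our returns policy.", api_key="YOUR_KEY")
print(request.full_url)
print(json.loads(request.data)["messages"][0]["role"])
```

Passing the request to `urllib.request.urlopen` would send it. Because the payload follows the OpenAI shape, a team already using an OpenAI-style client would in principle only need to change the base URL, key, and model name.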

There is also a product layer built around agents and the tools they use. AutoGLM sits in that lane for autonomous planning and execution, while products such as AutoGLM Phone show how Zhipu is extending model access into action-taking systems rather than stopping at chat. That matters for buyers who care more about workflow automation than prompt interfaces.

So the clean reading is simple. Zhipu offers three main paths: assistant products for fast exploration, API access for developer testing, and MaaS or agent tools for deeper deployment. That range makes the stack easier to pilot across different teams.

Product Ecosystem: Qingyan and Ying

Zhipu has expanded beyond raw model provision into application layers. Zhipu Qingyan AI serves as the primary conversational interface. It functions as a productivity tool for knowledge workers. It integrates document analysis, web browsing, and data visualization. This product demonstrates the “agent” capabilities of the GLM models, where the AI executes multi-step workflows rather than simply answering questions.

In the creative domain, Ying by Zhipu AI targets video generation. This tool reflects the industry trend toward multimodality. It allows marketing teams to generate short video clips from text prompts. Ying’s existence highlights a strategic pivot for Zhipu. They now offer end-to-end solutions from text generation to visual content creation. This consolidation appeals to marketing departments looking to reduce vendor sprawl.

Evaluation Checklist for Enterprise Adoption

Evaluating a large language model for enterprise use in 2026 requires a rigorous framework. A demo often looks perfect, but production workloads reveal cracks. Teams must assess five critical pillars before committing to Zhipu.

1. Quality and Reasoning Consistency

Generic benchmarks often fail to predict real-world performance. Teams should curate a “golden dataset” of internal queries. Test the Zhipu AI GLM-4.5 model on these specific prompts. Look for consistency in logic.

- Does the model hallucinate when asked about obscure internal acronyms?

- Does it maintain a consistent persona over long conversations?

The Zhipu Qingyan interface offers a low-cost way to run these initial tests manually.
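Once manual checks look promising, the golden dataset can be run automatically. The sketch below asks each prompt several times and reports how often the model gives its most common answer. The `ask` callable is a stand-in for whatever client a team uses, and exact string matching is a deliberately crude measure of consistency.

```python
from collections import Counter
from typing import Callable, Dict, List


def consistency_report(ask: Callable[[str], str],
                       prompts: List[str],
                       runs: int = 3) -> Dict[str, float]:
    """For each prompt, return the share of runs that gave the most common answer."""
    report = {}
    for prompt in prompts:
        answers = [ask(prompt).strip().lower() for _ in range(runs)]
        most_common_count = Counter(answers).most_common(1)[0][1]
        report[prompt] = most_common_count / runs
    return report


# Stand-in for a real model call, so the sketch runs offline.
def fake_model(prompt: str) -> str:
    canned = {"What does PTO stand for?": "Paid time off"}
    return canned.get(prompt, "I am not sure.")


golden_prompts = ["What does PTO stand for?", "What is project ZEBRA-7?"]
scores = consistency_report(fake_model, golden_prompts)
for prompt, score in scores.items():
    print(f"{score:.0%}  {prompt}")
```

A score well below 100 percent on a factual internal query is a signal to inspect the individual answers, since the model may be guessing.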

2. Latency and Throughput

Latency can sink an otherwise good product. If an agent takes ten seconds to answer a customer query, the user abandons the session. Run load tests against the openapi.zhipu.ai endpoint. Measure the Time to First Token (TTFT) and the tokens-per-second rate.

These metrics directly impact user experience. Latency often varies based on server load. Negotiate Service Level Agreements (SLAs) that guarantee throughput during peak business hours.
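Both metrics can be measured from any streamed response. The sketch below times an iterator of text chunks; `fake_stream` is a hypothetical stand-in for a real streaming client, and chunks only approximate tokens, so treat the rate as indicative.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_stream(chunks: Iterable[str]) -> Tuple[float, float, int]:
    """Return (ttft_seconds, chunks_per_second, chunk_count) for a streamed reply."""
    start = time.perf_counter()
    first_chunk_at = None
    count = 0
    for _ in chunks:
        if first_chunk_at is None:
            first_chunk_at = time.perf_counter()
        count += 1
    end = time.perf_counter()
    if first_chunk_at is None:
        raise ValueError("stream produced no chunks")
    ttft = first_chunk_at - start
    # Rate covers the chunks that arrived after the first one.
    generation_time = end - first_chunk_at
    rate = (count - 1) / generation_time if generation_time > 0 and count > 1 else 0.0
    return ttft, rate, count


def fake_stream(n: int = 5, first_delay: float = 0.05, gap: float = 0.01) -> Iterator[str]:
    """Simulate a model that pauses before the first chunk, then streams steadily."""
    time.sleep(first_delay)
    for i in range(n):
        if i:
            time.sleep(gap)
        yield f"tok{i}"


ttft, rate, count = measure_stream(fake_stream())
print(f"TTFT: {ttft:.3f}s, rate: {rate:.1f} chunks/s over {count} chunks")
```

Running the same measurement at different times of day, and under concurrent load, gives the evidence needed for an SLA conversation.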

3. Total Cost of Ownership

Per-token pricing can mislead. A low cost per token can still produce a higher bill if the model needs lengthy prompts to perform well, so analyze the prompt-to-completion ratio. Zhipu AI’s API pricing has become increasingly competitive.

It now undercuts many Western competitors on equivalent Chinese-language tasks. Factor in the engineering cost of integration. The API’s OpenAI compatibility reduces engineering hours, resulting in significant savings.
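The effect of prompt length on the bill is easy to work through. The sketch below compares two hypothetical models; every price and token count is invented for illustration and does not reflect any vendor’s actual rates.

```python
def monthly_cost(requests_per_month: int,
                 prompt_tokens: int,
                 completion_tokens: int,
                 input_price_per_million: float,
                 output_price_per_million: float) -> float:
    """Estimate monthly spend from per-request token counts and per-million prices."""
    input_cost = requests_per_month * prompt_tokens * input_price_per_million / 1_000_000
    output_cost = requests_per_month * completion_tokens * output_price_per_million / 1_000_000
    return input_cost + output_cost


# Hypothetical scenario: model A is cheaper per token but needs a 4,000-token prompt;
# model B costs more per token but works with a 1,000-token prompt.
model_a = monthly_cost(100_000, 4_000, 500, 0.50, 2.00)
model_b = monthly_cost(100_000, 1_000, 500, 1.00, 3.00)

print(f"model A (cheap per token, long prompts): {model_a:.2f}")
print(f"model B (pricier per token, short prompts): {model_b:.2f}")
```

Under these assumed numbers, model A costs 300.00 per month and model B costs 250.00, so the model with the lower per-token price ends up more expensive.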

4. Safety and Governance

This pillar demands the most scrutiny. Chinese regulations require strict adherence to content safety standards. The GLM models include built-in safety layers to comply with local laws. Enterprise buyers must understand how these safety filters work.

Overly aggressive filters can block legitimate business queries. Under-sensitive filters risk regulatory fines. Ask the vendor for the “refusal rate” on standard business vocabulary.

Ensure Zhipu AI’s safety alignment matches your industry vertical. Financial and healthcare sectors require tighter controls than consumer retail.
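Teams can also estimate the refusal rate themselves on a sample of their own business queries. The sketch below uses simple phrase matching; the marker phrases are assumptions and should be tuned to the refusals actually observed in testing.

```python
from typing import Iterable

# Hypothetical phrases treated as refusals; tune to responses actually observed.
REFUSAL_MARKERS = ("i cannot", "i can't", "unable to assist", "无法回答")


def is_refusal(response: str) -> bool:
    """Return True when the response contains any known refusal phrase."""
    text = response.lower()
    return any(marker in text for marker in REFUSAL_MARKERS)


def refusal_rate(responses: Iterable[str]) -> float:
    """Share of responses flagged as refusals (0.0 for an empty sample)."""
    responses = list(responses)
    if not responses:
        return 0.0
    return sum(is_refusal(r) for r in responses) / len(responses)


sample = [
    "Your invoice is due on the 15th.",
    "I cannot help with that request.",
    "The wire transfer limit is listed in section 4.",
    "Sorry, I am unable to assist with this topic.",
]
print(f"refusal rate: {refusal_rate(sample):.0%}")
```

Phrase matching will miss soft refusals and vague deflections, so a manual review of a subset remains worthwhile.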

5. Data Handling and Compliance Posture

Data sovereignty remains a top concern for multinational corporations operating in China. Zhipu AI operates entirely within Chinese data centers.

Enterprise buyers must ask specific questions.

- Where does the inference occur?

- Does Zhipu AI log prompt data for model training?

Most enterprise agreements allow for “zero data retention” or “isolated instance” deployments. This ensures that proprietary data does not leak into the foundation model weights.

The Competitive Landscape: Zhipu vs. The Field

The Chinese AI market is crowded. Buyers frequently weigh Zhipu AI against DeepSeek. DeepSeek has gained a reputation for cost efficiency and strong coding abilities. Its open-source strategy appeals to teams that want to self-host.

Zhipu takes a more balanced approach. While it offers open-weight models, its value proposition centers on the managed Zhipu AI API service. Zhipu generally outperforms DeepSeek on multimodal tasks and complex Chinese-language understanding.

DeepSeek often wins on raw price and coding benchmarks. The choice depends on the use case. Choose DeepSeek for self-hosted coding assistants. Choose Zhipu for customer-facing agents that require nuanced communication and multimodal features.

Other competitors include Baidu’s Ernie Bot and Alibaba’s Qwen. Baidu integrates deeply with its search ecosystem. Qwen leverages the Alibaba Cloud infrastructure. Zhipu AI distinguishes itself through neutrality. It does not favor a specific cloud platform, which makes it a natural fit for enterprises running hybrid cloud strategies.

A Practical Pilot Plan: What to Test First

Do not attempt a company-wide rollout immediately. Start with a focused pilot. Identify a single workflow with measurable outcomes. An internal knowledge base search is an ideal starting point.

Phase 1: Ingestion

Feed company documentation into the system. Use Zhipu Qingyan’s retrieval capabilities, or build a custom RAG pipeline using the API. Measure the accuracy of retrieval.
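One simple way to measure retrieval accuracy is the hit rate at k: the share of test queries whose known-correct document appears in the top k results. The sketch below uses a toy keyword retriever and invented document IDs as stand-ins for the real pipeline.

```python
from typing import Callable, Dict, List


def hit_rate_at_k(retrieve: Callable[[str], List[str]],
                  labeled_queries: Dict[str, str],
                  k: int = 3) -> float:
    """Share of queries whose expected document ID appears in the top-k results."""
    if not labeled_queries:
        return 0.0
    hits = sum(
        expected in retrieve(query)[:k]
        for query, expected in labeled_queries.items()
    )
    return hits / len(labeled_queries)


# Toy keyword retriever standing in for the real pipeline.
DOCS = {
    "hr-001": "annual leave policy and paid time off",
    "it-014": "vpn setup guide for remote laptops",
    "fin-007": "expense claims and reimbursement deadlines",
}


def toy_retrieve(query: str) -> List[str]:
    """Rank documents by the number of words they share with the query."""
    words = set(query.lower().split())
    scored = [(len(words & set(text.split())), doc_id) for doc_id, text in DOCS.items()]
    return [doc_id for score, doc_id in sorted(scored, reverse=True) if score > 0]


labeled = {
    "how do I claim expense reimbursement": "fin-007",
    "vpn setup on my laptop": "it-014",
    "parental leave rules": "hr-001",
    "office parking permits": "fac-002",  # no matching document exists
}
print(hit_rate_at_k(toy_retrieve, labeled, k=1))
```

Including queries with no correct document, like the last one here, shows how the pipeline behaves when the knowledge base has a gap.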

Phase 2: Integration

Connect the openapi.zhipu.ai endpoint to an internal Slack or DingTalk bot. Allow a small group of employees to test it. Collect feedback on answer quality.

Phase 3: Evaluation

Compare the results against human performance. Does the AI reduce the time employees spend searching for information? Use the evaluation checklist discussed earlier. Pay close attention to the refusal rate. If the model refuses to answer standard HR questions due to safety filters, it fails the pilot.

Phase 4: Scaling

Once the pilot succeeds, expand to customer-facing applications. Start with low-risk tasks, such as order tracking or FAQ responses. Monitor the Zhipu AI API usage logs daily. Look for patterns in user frustration.

Future Outlook for 2026 and Beyond

Zhipu AI’s trajectory points toward agentic workflows. The industry has moved beyond simple chat. The GLM models now function as the brains for autonomous agents. These agents can browse the web, interact with software APIs, and execute complex tasks.

Zhipu invests heavily in tool-use capabilities. Zhipu AI’s GLM 4.5 release focuses on the accuracy of function calls. This allows the model to interact with internal enterprise software, such as CRM systems or ERP databases. Enterprise buyers should prepare their data architecture for this shift. Clean APIs and structured data allow the models to operate effectively.
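On the enterprise side, preparing for function calling mostly means exposing clean, validated functions. The sketch below shows a tool description in the OpenAI-style shape and a dispatcher that runs a tool call safely. The `lookup_order` function and its data are hypothetical, and whether a given GLM endpoint returns tool calls in exactly this shape should be confirmed against the vendor’s docs.

```python
import json
from typing import Any, Callable, Dict

# Tool description in the OpenAI-style function-calling shape.
LOOKUP_ORDER_TOOL = {
    "type": "function",
    "function": {
        "name": "lookup_order",
        "description": "Return the status of an order in the CRM.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    },
}


def lookup_order(order_id: str) -> Dict[str, str]:
    """Hypothetical CRM lookup, backed by an in-memory table for the sketch."""
    orders = {"A-1001": "shipped", "A-1002": "processing"}
    return {"order_id": order_id, "status": orders.get(order_id, "not_found")}


REGISTRY: Dict[str, Callable[..., Any]] = {"lookup_order": lookup_order}


def dispatch(tool_call: Dict[str, Any]) -> str:
    """Validate and run one tool call, returning a JSON string for the model."""
    name = tool_call["function"]["name"]
    if name not in REGISTRY:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        arguments = json.loads(tool_call["function"]["arguments"])
        result = REGISTRY[name](**arguments)
    except (json.JSONDecodeError, TypeError) as exc:
        # Malformed JSON or wrong argument names are reported, not raised.
        return json.dumps({"error": str(exc)})
    return json.dumps(result)


# A tool call as it might appear in a model response.
example_call = {
    "id": "call_1",
    "type": "function",
    "function": {"name": "lookup_order", "arguments": '{"order_id": "A-1001"}'},
}
print(dispatch(example_call))
```

Returning errors as data rather than raising lets the model see what went wrong and retry, which matters for the accuracy of multi-step agent runs.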

The video generation capabilities of Ying by Zhipu AI will likely integrate more tightly with the text models. Marketing teams should pilot workflows in which a text prompt generates a script and instantly creates a companion video. This convergence of modalities drives efficiency in content marketing.

Put Zhipu AI in a Broader China Context

If this article has raised bigger questions for your team, ChoZan’s work is useful when you need context, not just content. Our work centers on helping companies understand how China’s technology, consumer, and digital systems are evolving in practice.

What ChoZan works on includes:

- China research and insights, including market research, consumer research, and marketing strategy support.

- Consulting and expert calls, including China business consulting, China digital transformation consulting, and on-demand expert calls.

- Keynotes and workshops, including China keynote speaking, digital transformation training, new retail and social commerce training, and marketing and social media training.

- Learning expeditions and immersion programs, including China Learning Expeditions, China Innovation Tour, China Market Immersion, and China Tech Tour.

- Trend watching and foresighting across China tech, consumer, innovation, retail, and digital marketing.

To explore what developments like this could mean for your business, book a consultation with ChoZan.

Frequently Asked Questions

1. What is the current pricing status for Zhipu AI models after the recent price increases?

Zhipu AI raised API prices twice in early 2026, with GLM‑5‑Turbo now costing roughly 80 percent more than GLM‑4.7. Enterprise contracts offer reserved capacity to mitigate volatility.

2. What is GLM‑5‑Turbo, and how does it differ from the base GLM‑5 model?

GLM‑5‑Turbo is a closed-source variant optimized for agent workloads like OpenClaw. It improves tool-call stability and multi-step execution while sharing the same 744-billion-parameter architecture.

3. What are the different thinking modes available in GLM‑5, and when should each be used?

Interleaved thinking reasons between tool calls. Preserved thinking maintains reasoning across turns for coding agents. Turn-level thinking enables reasoning per request to balance cost and capability.

4. Can Zhipu AI models run on non-NVIDIA hardware, and what does that mean for deployment?

GLM‑5 was trained entirely on Huawei Ascend chips. Open weights can run on any compatible hardware, removing the dependency on Western supply chains for organizations subject to export restrictions.

5. What is AutoClaw and how does it differ from the standard API access?

AutoClaw is a one-click local installation of OpenClaw that runs entirely on local hardware. It pre-packages 50 skills for enterprises needing data privacy without sending prompts to external infrastructure.

6. How should enterprises handle the GLM Coding Plan capacity limitations?

Zhipu AI imposed daily quota caps due to demand. Enterprises should secure reserved capacity through contracts or use standard API access, which operates under separate limits.

7. What enterprise security features does Zhipu AI offer for OpenClaw deployments?

Claw for Enterprise Security provides role-based access, audit logs, encryption, and mandatory human approval for critical agent actions, enabling the safe, autonomous deployment of agents at scale.

8. What does the shift from model sales to digital workforce subscriptions mean for buyers?

Zhipu now bundles tokens with deployment support into monthly subscriptions. This simplifies budgeting but increases vendor dependency, shifting focus from raw compute to outcome-based pricing.

9. How can organizations test GLM models without committing to production scale?

The Z.ai platform offers free evaluation through OpenAI-compatible endpoints. Open weights on Hugging Face with quantization let teams test locally without usage fees.

10. What measurable results have enterprise customers achieved with Zhipu AI deployments?

Jidou Technology launched an in-vehicle payment agent using GLM. Find Steel achieved a 7 percent gross margin contribution from GLM-powered procurement automation, handling 30 billion tokens monthly.

Ashley Dudarenok is a leading expert on China’s digital economy, a serial entrepreneur, and the author of 11 books on digital China. Recognized by Thinkers50 as a “Guru on fast-evolving trends in China” and named one of the world’s top 30 internet marketers by Global Gurus, Ashley is a trailblazer in helping global businesses navigate and succeed in one of the world’s most dynamic markets.

She is the founder of ChoZan 超赞, a consultancy specializing in China research and digital transformation, and Alarice, a digital marketing agency that helps international brands grow in China. Through research, consulting, and bespoke learning expeditions, Ashley and her team empower the world’s top companies to learn from China’s unparalleled innovation and apply these insights to their global strategies.

A sought-after keynote speaker, Ashley has delivered tailored presentations on customer centricity, the future of retail, and technology-driven transformation for leading brands like Coca-Cola, Disney, and 3M. Her expertise has been featured in major media outlets, including the BBC, Forbes, Bloomberg, and SCMP, making her one of the most recognized voices on China’s digital landscape.

With over 500,000 followers across platforms like LinkedIn and YouTube, Ashley shares daily insights into China’s cutting-edge consumer trends and digital innovation, inspiring professionals worldwide to think bigger, adapt faster, and innovate smarter.