China’s AI market in 2026 is consolidating around teams capable of executing at scale, a shift closely tied to broader China tech trends and AI ecosystem shifts that favor infrastructure and deployment over experimentation. StepFun AI stands out among the “Six Tigers” because it focuses on multimodal foundation models and real deployment rather than traffic growth or API commoditization.

Founded in April 2023 by Jiang Daxin, with CTO Zhu Yibo and chief scientist Zhang Xiangyu, the company built on a clear roadmap: unimodal to multimodal to unified systems that can understand and generate across text, vision, and audio.

This approach shows in its model stack. The Step series includes large language models, multimodal systems, and video generation models released in 2025, placing Stepfun alongside top Chinese tech innovators shaping AI development rather than typical API-driven startups.

Instead of packaging features first, Stepfun AI separates core models from applications, allowing enterprises to evaluate specific capabilities, such as video generation, without committing to a full platform.

Step Video T2V: Architecture, Performance, and Enterprise Deployment Limits

Technical Foundations

Step Video T2V is an open‑source text-to-video model released by Stepfun AI in February 2025. The technical report describes the model as having 30 billion parameters and as capable of generating videos up to 204 frames in length.

To make such a large model practical, the team designed a deep compression variational autoencoder (VAE), called Video-VAE, that compresses video by 16×16 in the spatial dimensions and 8× in the temporal dimension, so that generation happens in a much smaller latent space. User prompts are encoded through two bilingual text encoders to support both Chinese and English input.

A diffusion transformer (DiT) with three-dimensional full attention denoises input noise into latent frames, which the Video-VAE then decodes into video. The training objective uses flow matching. After pre-training, the model is fine-tuned with direct preference optimization (DPO) to reduce artifacts.

Running Step Video T2V with a batch size of 1 at 544×992 resolution across 204 frames requires roughly 77 GB of peak GPU memory with flash attention optimization, and inference time is about 743 seconds across four GPUs.
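To see what the compression figures mean for the 544×992, 204-frame configuration, the arithmetic can be sketched in a few lines of Python. The function name and the ceiling-division rounding are assumptions for illustration; the actual Video-VAE may pad frames or treat the first frame differently, and the transformer may group latent positions into patches.

```python
import math

def latent_grid(height, width, frames, spatial=16, temporal=8):
    """Return the (frames, height, width) size of the compressed latent grid.

    Ceiling division is an assumption; the real model may round differently.
    """
    return (
        math.ceil(frames / temporal),
        math.ceil(height / spatial),
        math.ceil(width / spatial),
    )

# Nominal reduction in positions: 16 * 16 * 8
NOMINAL_COMPRESSION = 16 * 16 * 8

t, h, w = latent_grid(544, 992, 204)
latent_positions = t * h * w            # positions the attention layers see
pixel_positions = 544 * 992 * 204       # positions in the raw video
print(f"latent grid: {t} x {h} x {w} = {latent_positions} positions")
print(f"nominal compression: {NOMINAL_COMPRESSION}x")
```

Under these assumptions the latent grid is 26 × 34 × 62, which is why full attention over every position is feasible at all, yet still demands data-center GPUs.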

This is not a model that runs on a laptop or a single consumer-grade card, which reflects China’s digital infrastructure advantage in AI deployment, where large-scale compute access is already embedded into the ecosystem. The official documentation recommends GPUs with 80GB of memory for optimal generation quality.

The combination of large parameters, a compressed latent space, and bilingual text encoding enables Step Video T2V to generate videos with rich motion dynamics and consistent content.

Step Video TI2V and Other Variants

Stepfun extended its video stack with Step Video TI2V, a model that generates short videos from a reference image and text prompt. Instead of creating scenes from scratch, it anchors generation to existing visual input, making it more relevant for brand and product use cases.

The model produces roughly 5-second clips at 540p resolution with 102 frames. Its key feature is motion control. Users can adjust motion strength to shift between subtle animation and more dynamic camera movement, which introduces a level of art direction missing in many text-to-video systems.

The TI2V evaluation benchmark includes 178 real-world and 120 anime-style prompt-image pairs, organized into categories of instruction adherence, subject and background consistency, and adherence to physical law.

However, the real consideration is control versus distortion. Because the model builds on an existing image, outputs must be tested for identity drift, texture inconsistency, and unintended motion artifacts.

For teams exploring image-to-video workflows, the priority should not be novelty, but alignment with how Chinese platforms integrate content and commerce, where visual output directly impacts conversion.

Step 3.5 Flash: A Sparse Language Model for Agents

StepFun’s language models underpin the rest of the platform. Step 3.5 Flash is a sparse mixture-of-experts language model that aims to deliver strong reasoning and agentic capabilities at lower computational cost.

The South China Morning Post reported that the model has 196 billion parameters but outperforms larger rivals from DeepSeek and Moonshot AI on reasoning benchmarks.

The report also notes that the model’s compact size and focus on reasoning were deliberate choices, and quotes Zhu Yibo as saying the team prioritised strong logical capability, an efficient context window, and speed.

For enterprises evaluating the StepFun app, it is useful to know that the underlying language model emphasises logic and speed, which may translate into faster response times compared with some large models.

StepFun App and Ecosystem

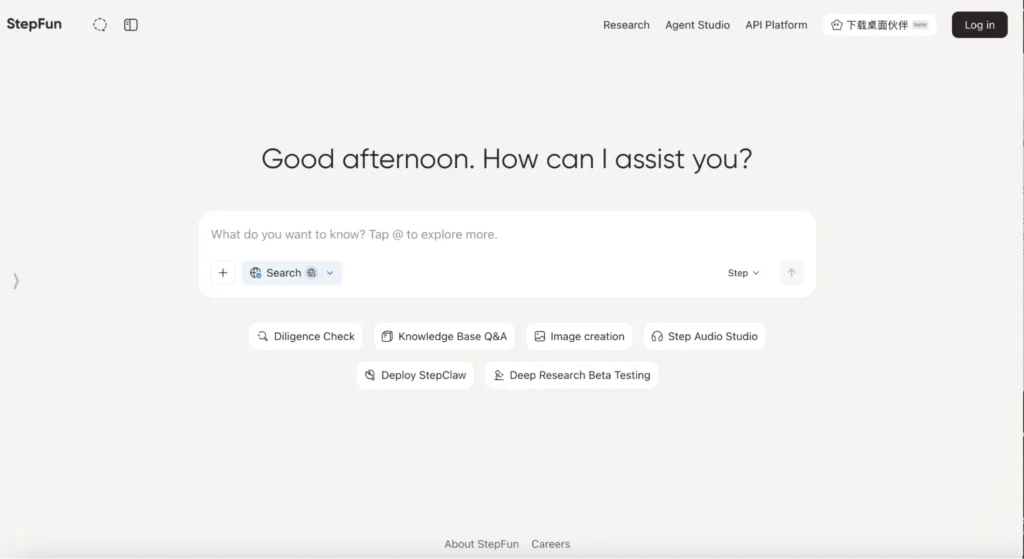

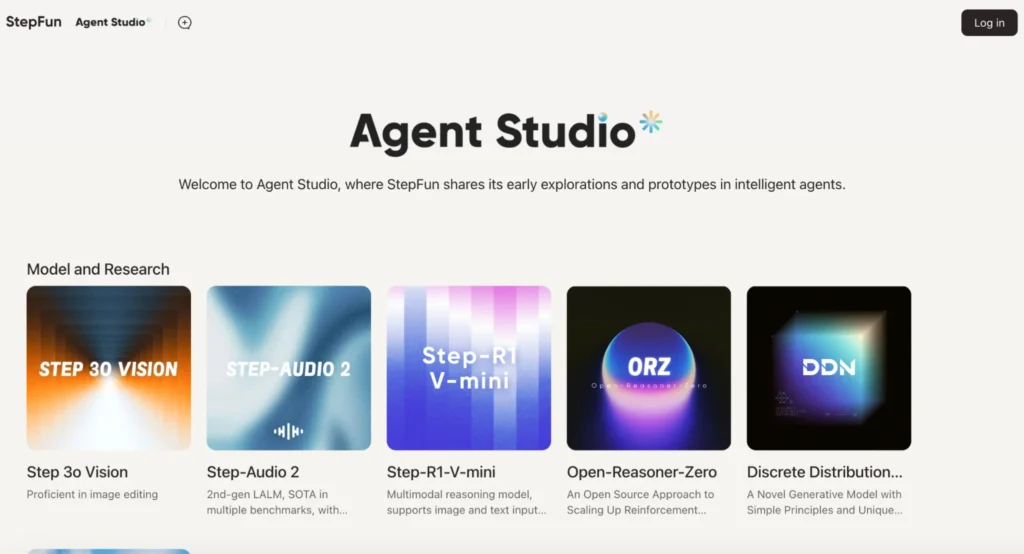

The StepFun app is the primary gateway for consumers and many business users. When visiting the site stepfun.ai, users are greeted by an agent interface that supports chat, web search, and creative tasks. The app integrates the Step 3.5 Flash language model, the Step Video models, and an audio model, enabling multimodal interaction.

The site promotes an “agent studio” where users can build early prototypes of autonomous agents. Because the underlying models are released with open weights, enterprises can choose between the hosted services and deploying the models in their own environment.

The open‑source nature also enables integration with custom workflows via the Hugging Face Diffusers library.

The Commercial Strategy That Changes the Risk Profile

The fundraising numbers grab attention. In January 2026, Stepfun announced a B+ round exceeding RMB 5 billion (USD 717 million), surpassing the IPO proceeds of listed rivals Zhipu AI and MiniMax.

The round included state-backed investors like Shanghai State-owned Capital Investment and China Life Insurance, alongside returning shareholders Tencent and existing venture investors. But the money itself tells less than the strategic shift that accompanied it.

In the same month, Yin Qi formally took on the role of chairman. Yin co-founded Megvii, the computer vision company known as Face++, and serves as chairman of Afari, an automotive technology firm. His appointment signals a strategic pivot toward what Stepfun calls AI plus terminals.

The company’s internal positioning frames it not as China’s OpenAI but as something closer to a combination of xAI and Tesla. This distinction matters for enterprise evaluation because it defines where Stepfun places its commercial bets.

Enterprise Evaluation Checklist

Selecting a generative video model for enterprise use involves more than watching marketing demos. The following checklist offers concrete questions and measures for evaluating StepFun AI’s video generation capabilities. Use it as a starting point and adapt it to your organisation’s use cases and risk tolerance.

Quality and Consistency

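Quality testing should focus on the specific model family relevant to the use case. For video generation, the evaluation should follow the categories Stepfun uses in its own video benchmarks (instruction adherence, subject and background consistency, and adherence to physical law) rather than generic prompts.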

- Test instruction adherence by checking whether generated actions match specified motions.

- Test subject and background consistency by running multiple generations from the same starting image.

- Test physical plausibility across object interactions, lighting, and collisions.

The open weights allow direct testing without commercial commitment.
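One way to keep these checks comparable across reviewers is to record a score per category for every clip and aggregate them. The sketch below is a minimal illustration, not a StepFun tool: the category keys, the 1 to 5 scale, and the pass mark are all assumptions to adapt.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical category keys mirroring the three checks above.
CATEGORIES = ("instruction_adherence", "consistency", "physical_plausibility")

@dataclass
class ClipReview:
    clip_id: str
    scores: dict  # category -> reviewer score on an assumed 1-5 scale

def summarize(reviews, pass_mark=4.0):
    """Average each category across clips and flag those below pass_mark."""
    summary = {}
    for category in CATEGORIES:
        avg = mean(review.scores[category] for review in reviews)
        summary[category] = {"mean": round(avg, 2), "passed": avg >= pass_mark}
    return summary

reviews = [
    ClipReview("clip-a", {"instruction_adherence": 5, "consistency": 4, "physical_plausibility": 3}),
    ClipReview("clip-b", {"instruction_adherence": 4, "consistency": 4, "physical_plausibility": 4}),
]
for category, result in summarize(reviews).items():
    print(category, result)
```

A per-category summary makes it easier to see whether a model fails on one dimension, such as physics, while passing on the others.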

Latency and Throughput

Latency measurement requires understanding the hardware trade-offs. The published inference times assume four GPUs with 80GB of memory. For on-device deployment, latency will vary based on the terminal hardware and quantization level. Enterprises should request performance data for their target deployment environment rather than assuming cloud benchmarks translate.
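The published figure of about 743 seconds on four GPUs can be turned into a rough capacity estimate. The sketch below assumes that each job occupies four GPUs for the full run and that jobs scale linearly across a larger pool; both are simplifications, and the function names are illustrative.

```python
def gpu_seconds_per_clip(seconds_per_clip, gpus_per_job):
    """Total GPU time consumed by one clip."""
    return seconds_per_clip * gpus_per_job

def clips_per_hour(seconds_per_clip, gpus_per_job, gpus_available):
    """Clips produced per hour, assuming jobs run in parallel with no overhead."""
    jobs_in_parallel = gpus_available // gpus_per_job
    return jobs_in_parallel * 3600 / seconds_per_clip

# Published figures: roughly 743 seconds per clip on four GPUs.
print(gpu_seconds_per_clip(743, 4))
print(round(clips_per_hour(743, 4, gpus_available=8), 1))
```

With those figures, one clip consumes 2,972 GPU-seconds, and an eight-GPU node running two jobs side by side would produce a little under ten clips per hour.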

Cost Drivers

Cost drivers differ by deployment model. On-device licensing involves per-unit fees plus integration costs. Outcome-based pricing ties costs to usage volume. The company’s API, while not its primary focus, offers a third path for enterprises unwilling to manage terminal deployment. Each option creates a different cost structure and scales differently.
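The differences in cost structure are easier to compare when each one is written as a formula. The sketch below is illustrative only: every price in it is a placeholder rather than a StepFun rate, and the only sourced number is the 2,972 GPU-seconds per clip implied by the published benchmark (743 seconds on four GPUs).

```python
def self_hosted_cost(clips, gpu_seconds_per_clip, gpu_hour_price):
    """Compute cost of generating clips on rented or owned GPUs."""
    return clips * gpu_seconds_per_clip / 3600 * gpu_hour_price

def on_device_cost(units, per_unit_fee, integration_cost):
    """Per-unit licensing plus a one-off integration cost."""
    return units * per_unit_fee + integration_cost

def usage_based_cost(clips, price_per_clip):
    """Cost that scales directly with usage volume."""
    return clips * price_per_clip

# All prices below are hypothetical placeholders.
print(round(self_hosted_cost(1000, 2972, gpu_hour_price=2.0), 2))
print(on_device_cost(units=10_000, per_unit_fee=1.5, integration_cost=50_000))
print(usage_based_cost(1000, price_per_clip=2.5))
```

Plugging in real quotes shows where the break-even points sit: self-hosted and usage-based costs grow with clip volume, while on-device costs grow with the number of units shipped.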

Governance and Safety

Governance requirements center on data handling and model control. On-device deployment gives enterprises the most data sovereignty, because user inputs and outputs remain local. Cloud deployment shifts data handling responsibilities to Stepfun.

Enterprises should verify the specific data retention policies, model update mechanisms, and audit trails available in each deployment model.

Integration and Extensibility

- APIs and SDKs – Determine how to integrate the model into existing workflows. Step Video T2V is compatible with the Hugging Face diffusers library, which simplifies integration with Python applications. The StepFun app provides a user interface but may not expose programmatic access.

- Custom fine-tuning – Investigate whether you can fine-tune the model on your own data. The report outlines a cascaded training pipeline with pre-training and fine-tuning stages. Fine-tuning can adapt the model to a specific domain vocabulary or visual style. Ensure that you have enough data and computing power for this process.

- Agent ecosystem – If you plan to build autonomous agents that use video generation as part of a larger workflow, evaluate the agent studio. Step 3.5 Flash emphasises reasoning and agentic capabilities. Check whether the video model can be called as a tool from within an agent script.

Video Generation Evaluation: Style Control, Consistency and Safety

Evaluating a generative video model requires more than visual inspection. The goal is to measure whether outputs remain consistent, controllable, and usable under real production conditions.

1. Semantic Alignment

Start by testing whether outputs follow instructions precisely. Prompts should include specific actions, objects, and scene constraints. Then compare outputs against expected behavior.

Use structured scoring where possible. Metrics like CLIP similarity can help, but human review remains essential for brand-specific accuracy.
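A CLIP-style score is, at its core, a cosine similarity between a text embedding and image embeddings. The sketch below shows only that scoring step in plain Python. It assumes the embeddings have already been produced by a CLIP model of your choice, and the toy vectors are made up for illustration.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def prompt_alignment(text_embedding, frame_embeddings):
    """Mean text-to-frame similarity across the sampled frames of one clip."""
    scores = [cosine_similarity(text_embedding, frame) for frame in frame_embeddings]
    return sum(scores) / len(scores)

# Toy two-dimensional vectors; real CLIP embeddings have hundreds of dimensions.
text = [1.0, 0.0]
frames = [[1.0, 0.0], [0.0, 1.0]]
print(prompt_alignment(text, frames))
```

Averaging across sampled frames gives one number per clip, which is useful for ranking variations but does not replace human review of brand-specific details.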

2. Temporal Consistency

Next, assess how the video behaves over time. Look for flickering, object instability, or unnatural frame transitions.

Pay attention to motion continuity. Camera movement, object interaction, and scene physics should remain coherent from start to finish.
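Flicker and abrupt transitions can be screened automatically before human review. The sketch below is a simple heuristic, not an established benchmark metric: it treats each frame as a flat list of pixel intensities, measures the mean absolute change between neighbouring frames, and flags transitions that are far above the median. The threshold factor is an assumption to tune.

```python
from statistics import median

def frame_difference(frame_a, frame_b):
    """Mean absolute intensity change between two frames of equal size."""
    return sum(abs(a - b) for a, b in zip(frame_a, frame_b)) / len(frame_a)

def flicker_profile(frames):
    """Change between each pair of consecutive frames."""
    return [frame_difference(frames[i], frames[i + 1]) for i in range(len(frames) - 1)]

def flag_jumps(profile, factor=3.0):
    """Indices of transitions whose change exceeds factor times the median."""
    typical = median(profile)
    return [i for i, change in enumerate(profile) if typical > 0 and change > factor * typical]

# Toy clip: steady motion with one abrupt jump between frames 2 and 3.
frames = [[0, 0], [1, 1], [2, 2], [12, 12], [13, 13]]
profile = flicker_profile(frames)
print(profile)
print(flag_jumps(profile))
```

Flagged transitions are candidates for closer inspection; intentional scene cuts or fast camera moves will also trigger the heuristic.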

3. Style and Identity Control

For commercial use, consistency matters more than creativity. Test whether the model can reproduce the same subject, product, or visual style across multiple generations.

In TI2V workflows, this becomes critical. Even small deviations in color, shape, or structure can break brand alignment.
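Color deviation is the easiest of these to quantify. As a rough sketch, compare the brand's reference color with the average color sampled from the product region in each generation. Plain RGB distance is a crude proxy rather than a perceptual measure, and the tolerance value and sample colors below are assumptions.

```python
import math

def color_distance(rgb_a, rgb_b):
    """Euclidean distance between two RGB colors (0-255 per channel)."""
    return math.dist(rgb_a, rgb_b)

def drift_report(reference_rgb, generation_rgbs, tolerance=20.0):
    """Distance of each generation's sampled color from the reference."""
    report = []
    for index, rgb in enumerate(generation_rgbs):
        distance = color_distance(reference_rgb, rgb)
        report.append({
            "generation": index,
            "distance": round(distance, 1),
            "within_tolerance": distance <= tolerance,
        })
    return report

brand_red = (200, 30, 30)                     # hypothetical brand color
sampled = [(200, 30, 30), (230, 70, 30)]      # hypothetical sampled colors
for row in drift_report(brand_red, sampled):
    print(row)
```

Shape and structure drift need visual review or a dedicated similarity model, so a check like this covers only one of the three deviations named above.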

4. Physical Plausibility

Check whether generated scenes follow basic physical logic. Lighting, shadows, collisions, and gravity should behave predictably.

Failures here often signal deeper model limitations that will appear more frequently at scale.

5. Safety and Misuse Risk

Finally, evaluate how the model handles sensitive or ambiguous prompts. Test edge cases involving realistic scenes, people, or branded environments.

The objective is not just filtering outputs. It is understanding where the model may produce misleading or high-risk content.

Pilot Plan: What to Test First

Launching a new generative video capability involves iterative testing. A well-structured pilot can reveal strengths and weaknesses before full deployment.

- Define success criteria – Determine what success looks like. For example, a marketing team might define success as creating short clips that align with the brand’s aesthetics and narrative. A training department might require instructional videos with a clear visual flow.

- Start with simple prompts – Begin with prompts describing basic scenes or product showcases. Evaluate resolution, motion, and consistency. Use the Step Video T2V online demo through Yuewen to gauge baseline quality. Record generation time and GPU usage.

- Introduce brand elements – Test prompts that include your company name, logo, or mascot. In an image-to-video scenario, supply a reference image to the TI2V model. Look for fidelity across frames and whether the model adds unwanted distortions.

- Experiment with styles and motion – Use descriptive adjectives to specify lighting, mood, and camera perspective. For TI2V, vary the motion strength slider. Document which settings produce the most appealing results.

- Assess safety and compliance – Include prompts that could produce sensitive content, such as depictions of people in professional settings. Observe whether the model avoids inappropriate imagery. Verify that copyrighted material is not reproduced.

- Gather feedback – Collect comments from stakeholders who represent target audiences. Ask about clarity, realism, and emotional impact. Combine subjective feedback with objective metrics, such as the CLIP score.

- Iterate and fine-tune – Based on pilot results, decide whether to fine-tune the model on your own dataset or adjust prompts. If the model meets requirements, move to integration and scale testing.
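The steps above are easier to run consistently when the pilot is laid out as a test matrix before any video is generated. The sketch below builds one row per combination of prompt, motion strength, and seed, with empty fields for the measurements to record. The field names and the motion strength values are hypothetical and should be replaced with whatever the tool you test actually exposes.

```python
from itertools import product

def build_pilot_matrix(prompts, motion_strengths, seeds):
    """One planned run per combination, with blank fields to fill in later."""
    combinations = product(prompts, motion_strengths, seeds)
    return [
        {
            "run_id": f"run-{number:03d}",
            "prompt": prompt,
            "motion_strength": strength,
            "seed": seed,
            "generation_seconds": None,   # record after each run
            "peak_gpu_gb": None,          # record after each run
            "reviewer_notes": "",
        }
        for number, (prompt, strength, seed) in enumerate(combinations, start=1)
    ]

matrix = build_pilot_matrix(
    prompts=["product on a white table", "mascot waving at the camera"],
    motion_strengths=[0.2, 0.5, 0.8],     # hypothetical slider values
    seeds=[1, 2],
)
print(len(matrix), matrix[0]["run_id"], matrix[-1]["run_id"])
```

Fixing the matrix up front keeps the pilot from drifting toward the most flattering examples and makes results comparable across iterations.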

Turning Insight Into Action

Understanding tools like StepFun AI is only useful if you know how to apply them inside real business systems. Most teams struggle at this stage. The gap is not technology. The gap is execution.

That is where ChoZan operates.

ChoZan is a China-focused digital transformation consultancy that works with global brands to translate China’s fastest-moving trends into practical strategies. The focus stays on how things actually work in the market, not on theory.

Our work typically includes:

- Market research and consumer insight grounded in real platform behavior

- Strategy development based on China’s digital ecosystems and commerce models

- Digital transformation consulting for AI, social commerce, and content systems

- Executive workshops, training, and China learning expeditions for leadership teams

If you are evaluating StepFun AI video, multimodal models, or AI-driven content systems, the real question is not what the model can do. The question is how it fits into your growth strategy.

You can book a consultation with ChoZan to explore your specific use case and define what will actually drive results in your business.

FAQs: Stepfun AI, Video Models, and Enterprise Use

1. How does Stepfun AI handle prompt sensitivity compared to other video models?

Stepfun AI tends to respond strongly to prompt phrasing. Small wording changes can shift camera angle, motion, or scene composition. Teams should standardize prompt templates early to avoid inconsistent outputs across campaigns or production workflows.
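A standardized template can be as simple as a fixed sentence structure with named slots. The sketch below uses Python's built-in `string.Template`; the slot names and the wording of the template are illustrative assumptions, not a format StepFun prescribes.

```python
from string import Template

# Hypothetical house template for product shots.
PRODUCT_SHOT = Template(
    "$subject, $action, $camera camera, $lighting lighting, $style style"
)

def render_prompt(template, **fields):
    """Fill every slot; substitute() raises KeyError if a slot is missing."""
    return template.substitute(**fields)

prompt = render_prompt(
    PRODUCT_SHOT,
    subject="a red sneaker",
    action="rotating slowly",
    camera="static",
    lighting="soft studio",
    style="photorealistic",
)
print(prompt)
```

Because a missing slot raises an error instead of producing a partial prompt, the template forces every campaign to specify the same set of controls.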

2. Can Stepfun AI generate videos suitable for paid advertising campaigns?

Yes, but only after refinement. Raw outputs often lack brand alignment. You need post-production editing, brand overlays, and script control. Treat it as a creative generator, not a finished ad production tool.

3. What are the biggest failure cases in Stepfun AI video generation?

It struggles with complex interactions, like multiple characters or precise object handling. Motion can break in longer sequences. You may also see inconsistencies in faces, logos, or product shapes across frames.

4. How does Stepfun AI perform with product-focused video content?

It works best for conceptual visuals, not exact product replication. If your use case requires pixel-accurate representation, you will need reference images and heavy iteration. Even then, results need manual validation.

5. Is Stepfun AI suitable for internal enterprise content creation?

Yes, especially for training visuals, concept videos, and internal storytelling. The quality is often more than sufficient for internal use, where speed and cost efficiency matter more than perfect visual realism.

6. How much prompt engineering effort is required to get usable results?

More than most teams expect. You will need structured prompts, iteration cycles, and internal guidelines. Without this, output quality varies too much to support consistent business use.

7. Can Stepfun AI support multilingual video generation workflows?

Yes. It handles Chinese and English prompts well, but the output style may differ slightly depending on the language. Teams should test both versions and align prompts to maintain a consistent visual direction across markets.

8. What role does human editing play after video generation?

It is essential. Generated videos often require trimming, correction, and enhancement. Most companies integrate AI output into existing editing pipelines rather than replacing editors entirely.

9. How does Stepfun AI fit into a broader content production system?

It works best as a front-end ideation and prototyping tool. You generate concepts quickly, test variations, and then move selected outputs into structured production workflows for refinement and scaling.

10. What teams inside a company benefit most from Stepfun AI?

Marketing, content, and product teams see the most value. Strategy teams can also use it for rapid visualization. Engineering teams benefit less unless they are directly integrating or customizing the models.

11. How should companies measure ROI when using Stepfun AI video tools?

Focus on speed, cost savings, and creative output volume. Track how many concepts you can test per week and how quickly campaigns move from idea to execution. Do not rely only on visual quality as a metric.

12. What is the biggest mistake companies make when adopting Stepfun AI?

They expect production-ready output from day one. This leads to disappointment. The real value comes from building workflows around the tool, not using it as a plug-and-play replacement for existing systems.

Ashley Dudarenok is a leading expert on China’s digital economy, a serial entrepreneur, and the author of 11 books on digital China. Recognized by Thinkers50 as a “Guru on fast-evolving trends in China” and named one of the world’s top 30 internet marketers by Global Gurus, Ashley is a trailblazer in helping global businesses navigate and succeed in one of the world’s most dynamic markets.

She is the founder of ChoZan 超赞, a consultancy specializing in China research and digital transformation, and Alarice, a digital marketing agency that helps international brands grow in China. Through research, consulting, and bespoke learning expeditions, Ashley and her team empower the world’s top companies to learn from China’s unparalleled innovation and apply these insights to their global strategies.

A sought-after keynote speaker, Ashley has delivered tailored presentations on customer centricity, the future of retail, and technology-driven transformation for leading brands like Coca-Cola, Disney, and 3M. Her expertise has been featured in major media outlets, including the BBC, Forbes, Bloomberg, and SCMP, making her one of the most recognized voices on China’s digital landscape.

With over 500,000 followers across platforms like LinkedIn and YouTube, Ashley shares daily insights into China’s cutting-edge consumer trends and digital innovation, inspiring professionals worldwide to think bigger, adapt faster, and innovate smarter.